Key takeaways:

- SIEM solutions need big data analytics to efficiently process fast‑growing volumes of diverse security telemetry in real time.

- Integrating big data with SIEM helps organizations improve threat detection, reduce false positives, improve scalability, and lower security operations costs.

- Your team must be ready to address compatibility with existing SIEM features, regulatory challenges, cost and resource constraints, and other issues.

- Outsourcing development and maintenance to an experienced vendor helps accelerate delivery, close expertise gaps, and avoid overloading internal teams.

Modern digital infrastructures produce more telemetry than traditional Security Information and Event Management (SIEM) systems were ever designed to process.

And if a SIEM architecture can’t handle the volume, velocity, and variety of security data, the business that relies on it will face consequences including delayed detection, scalability bottlenecks, and alert fatigue.

To help security teams maintain strong visibility and respond to threats effectively, SIEM vendors and security specialists should consider integrating big data analytics.

Big data analytics introduces distributed storage, scalable ingestion, and advanced ML algorithms. This allows SIEM platforms to:

- Process massive data volumes efficiently

- Correlate complex multi‑source telemetry

- Significantly improve threat detection accuracy

In this article, we explore how big data transforms SIEM capabilities. You’ll discover key benefits, use cases, and architectural considerations, find examples of technology stacks, and learn what challenges to expect during implementation — and how to overcome them.

This article will be helpful to SIEM owners and development leaders who are thinking about enhancing their solutions with big data analytics.

Contents:

- Why modern SIEM needs big data

- Key advantages of SIEM tools enhanced with big data analytics

- Use cases: Where to apply big data–driven SIEM

- From theory to practice: Architectural considerations

- What tools to use: Example of a technology stack

- Implementation challenges and how to overcome them

- How Apriorit can help you with secure SIEM & big data analytics development

Why modern SIEM needs big data

Why might traditional SIEM not be enough?

SIEM solutions help organizations detect threats and meet compliance requirements. To do that, they collect, normalize, and correlate data on security events.

However, digital ecosystems tend to expand. As the number of endpoints, users, identities, containers, and cloud workloads grows, so does the amount of security data they generate.

Many large organizations are already noticing that the combined volume, velocity, and variety of their security data exceeds the architectural limits of their SIEM tools.

How can big data analytics help?

Big data analytics enables systematic processing and analysis of large amounts of data and complex data sets.

You can use big data analytics to:

- Capture signals from numerous sources like network logs, endpoints, cloud environments, identity systems, and threat intelligence feeds.

- Store massive datasets of captured information in scalable environments like data lakes or distributed storage systems that are optimized for scale and flexibility.

- Apply advanced analytics and machine learning (ML) models to process big data in near real time.

- Identify subtle anomalies and behavioral patterns that traditional SIEM correlation rules might miss.

- Quickly build analytics dashboards using open-source and cloud tools.

Is there real‑world proof of big data’s impact on SIEM?

Multiple studies and industry evaluations already show that pairing SIEM with big data analytics improves detection accuracy, capacity, and operational efficiency:

- A Fortune 500 financial institution replaced existing SIEM solutions with Anomali’s autonomous unified security data platform that’s enhanced with big data analytics capabilities. As a result, the financial institution reduced critical incidents by 90%.

- In one experimental implementation, an AI‑enhanced SIEM system powered by big data analytics achieved 94.2% detection accuracy with a 73% reduction in false positives compared to conventional SIEM implementations.

- An article published in the International Journal of Scientific Research in Computer Science, Engineering and Information Technology demonstrates that organizations using big data analytics can ingest and analyze logs from diverse sources at a massive scale. When used in sophisticated SIEM implementations, big data analytics helps uncover correlations that indicate emerging threats or hidden compromise paths.

Want to level up your product protection and performance?

Apriorit cybersecurity experts will help you enhance your software, finding the perfect balance between security, innovation, and user experience.

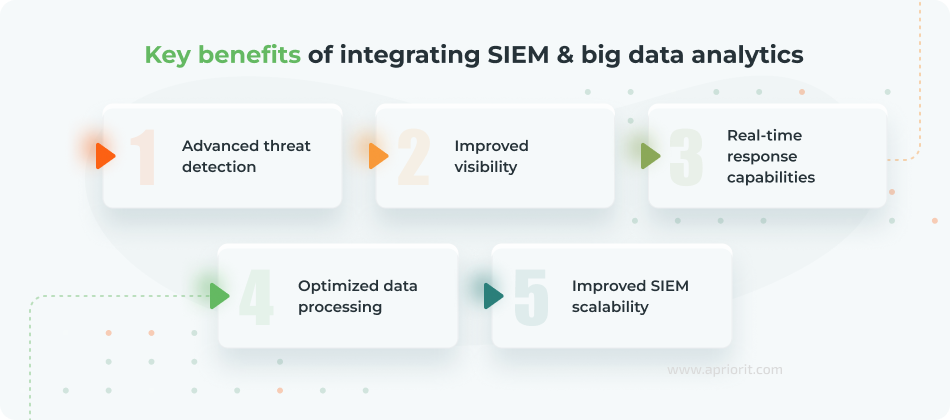

Key advantages of SIEM tools enhanced with big data analytics

Big data analytics promises to transform SIEM solutions, helping security teams detect, investigate, and respond to threats faster.

Let’s explore the main benefits of merging these technologies:

1. Advanced threat detection. Big data analytics allows SIEM platforms to analyze massive datasets using ML models. Such models help identify subtle anomalies and multi‑stage attack patterns that are undetectable through static rules alone.

Some attacks may affect multiple systems over time, making them difficult to detect when each event is viewed in isolation. However, with a big data platform integrated in your SIEM solution, your teams can correlate events over historical timelines to reveal suspicious behavior.

2. Improved visibility. Big data–driven SIEM implementations can receive and process more telemetry from networks, endpoints, cloud workloads, and identity systems than traditional SIEM systems can.

This helps SIEM solutions gain richer context and accelerate root‑cause analysis, even in complex enterprise environments. With such advanced analytics, your solution can more accurately interpret incidents and better prioritize alerts.

3. Real‑time response capabilities. Many traditional SIEM tools promise real-time analytics. Not all of them can keep that promise when the volume of data significantly increases.

Big data analytics can solve that challenge by introducing continuous stream processing, which helps your solution detect threats and act on them as they occur. High-throughput architectures capable of processing over 1.2 million events per second demonstrate how big data enables SIEM solutions to maintain low-latency detection even under extreme load.

4. Optimized data processing. Big data modules offer scalable storage and automated analytics. This can help your SIEM solution centralize log retention, enforce data minimization, and automatically generate reports. Such optimizations reduce the operational overhead of complying with the GDPR, industry standards, and other regulatory requirements.

5. Improved SIEM scalability. Big data architectures usually combine data lakes and distributed storage with horizontal scaling and optimized data compression. These capabilities support long-term analytics and keep SIEM solutions working efficiently even during spikes in log volume.

Use cases: Where to apply big data–driven SIEM

Before making integration decisions, it's essential to clearly understand where big data–driven SIEM solutions deliver the greatest impact.

Knowledge of the most promising use cases will help your team accurately prioritize development investments and design scalable detection capabilities.

Here are a few industry-specific examples to show how integration of big data analytics and SIEM tools can streamline tasks for security teams:

| Industry | Examples of applying big data–driven SIEM |
|---|---|
| FinTech | ✅ Identify coordinated, low-signal phishing campaigns ✅ Spot unusual transaction patterns and other anomalies ✅ Detect fraud and account takeover patterns across large volumes of data |
| e-Commerce | ✅ Detect exploitation attempts against retail system vulnerabilities ✅ Flag anomalous traffic patterns that may indicate attempts to access sensitive data ✅ Protect high-volume data environments during seasonal peaks |
| Manufacturing | ✅ Analyze historical equipment metrics ✅ Predict equipment failure using ML ✅ Identify anomalies in programmable logic controllers ✅ Flag abnormal cross-network communications |
| Cybersecurity | ✅ Detect insider threats, data exfiltration, and lateral movement ✅ Centralize log management ✅ Accelerate forensic investigations ✅ Correlate massive telemetry volumes in real time |
| Healthcare | ✅ Streamline HIPAA compliance ✅ Establish advanced insider monitoring ✅ Identify abnormal access to patient records within EHR systems ✅ Detect unusual spikes in record lookups ✅ Identify early ransomware indicators |

Now, let's look closer at key use cases for big data–driven SIEM that apply across industries:

1. Advanced event correlation

Big data analytics can significantly enhance the ability of SIEM systems to correlate millions of events across distributed environments such as cloud workloads, identity systems, endpoints, and network telemetry.

By connecting disparate signals, advanced SIEM solutions:

- Provide clear context instead of fragmented alerts

- Improve response times

- Highlight attack paths

- Reduce alert fatigue

- Allow for more accurate investigations
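To make the idea of correlation concrete, here is a minimal sketch that groups events by user and flags users who appear in several telemetry sources within a short time window. The event shape, the `correlate` function, and the thresholds are assumptions made for illustration; a production SIEM would run comparable logic in a distributed engine rather than in memory.

```python
from collections import defaultdict


def correlate(events, window_s=300, min_sources=3):
    """Return users seen in at least `min_sources` distinct sources
    within any span of `window_s` seconds."""
    by_user = defaultdict(list)
    for ts, user, source in sorted(events):
        by_user[user].append((ts, source))

    flagged = set()
    for user, items in by_user.items():
        start = 0
        for end in range(len(items)):
            # Advance the left edge until the span fits inside the window.
            while items[end][0] - items[start][0] > window_s:
                start += 1
            sources = {src for _, src in items[start:end + 1]}
            if len(sources) >= min_sources:
                flagged.add(user)
                break
    return flagged


# Hypothetical events: (timestamp_seconds, user, telemetry_source)
events = [
    (0, "alice", "vpn"),
    (60, "alice", "endpoint"),
    (120, "alice", "cloud"),
    (0, "bob", "vpn"),
    (4000, "bob", "endpoint"),
    (9000, "bob", "cloud"),
]

print(correlate(events))  # {'alice'}
```

Here, only `alice` is flagged: her three events come from three sources within two minutes, while `bob`'s events are spread over hours.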

2. User and entity behavior analytics (UEBA)

You can use big data analytics as a technical foundation for creating UEBA functionality in SIEM tools. UEBA requires ingesting and processing huge volumes of diverse data on user activity over long time periods. While traditional SIEM architectures struggle with this scale, big data analytics provides distributed storage, parallel processing, and ML algorithms that can make UEBA feasible.

UEBA models flag deviations such as unusual login times, abnormal data transfers, or unusual machine‑to‑machine communication. This helps your SIEM data analytics tool be even more effective at detecting insider threats, compromised accounts, and other types of attacks.
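As a simplified illustration of how a behavioral baseline can flag a deviation, the sketch below compares a new observation against a user's history using a z-score. The `is_anomalous` helper, the threshold of 3.0, and the sample login hours are assumptions for this example; real UEBA models are considerably richer and would, for instance, treat hour of day as a circular value.

```python
from statistics import mean, pstdev


def is_anomalous(history, value, threshold=3.0):
    """Flag `value` if it lies more than `threshold` standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly constant history: any change is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical baseline: hours of day at which a user usually logs in.
login_hours = [9, 9, 10, 8, 9, 10, 9, 8]

print(is_anomalous(login_hours, 3))   # True: a 3 a.m. login is far from the baseline
print(is_anomalous(login_hours, 10))  # False: within normal variation
```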

3. Predictive analytics

SIEM enhanced with big data leverages historical patterns, time‑series modeling, and ML‑based forecasting.

Historical depth is crucial for efficient predictive modeling. SIEM solutions that employ relevant AI and ML algorithms empower your security teams to:

- Forecast potential attack paths and vectors

- Assess behavioral risk scores

- Identify vulnerable assets

- Proactively harden systems

- Optimize defensive controls

Implementing predictive analytics shifts the work of your SIEM tools from reaction to proactive defense.

4. Lateral movement detection

Attackers who breach one system rarely stop there. Malicious actors can pivot, escalate privileges, and probe new systems.

Big data analytics evaluates events and signs such as:

- Sequences of login attempts

- Unusual authentication chains

- Process spawning

- Unusual machine‑to‑machine communication across the entire environment

This helps you to identify lateral movement attempts early and prevent attackers from reaching sensitive systems.
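One way to picture the analysis of authentication chains is the sketch below, which tracks how many consecutive hops an account makes from host to host. The record shape and the `longest_chain` function are hypothetical. The sketch also has no time window, so repeated back-and-forth logins between two hosts would inflate the count.

```python
def longest_chain(logins):
    """Return {user: length of the longest hop chain}, where a chain
    grows when a login starts from a host the same user reached earlier."""
    depth = {}    # (user, host) -> hops taken to reach that host
    longest = {}
    for _ts, user, src, dst in sorted(logins):
        hops = depth.get((user, src), 0) + 1
        if hops > depth.get((user, dst), 0):
            depth[(user, dst)] = hops
        longest[user] = max(longest.get(user, 0), hops)
    return longest


# Hypothetical logins: (timestamp, user, source_host, destination_host)
logins = [
    (1, "svc", "ws1", "app1"),
    (2, "svc", "app1", "db1"),
    (3, "svc", "db1", "dc1"),
    (1, "kim", "ws2", "app1"),
]

chains = longest_chain(logins)
suspicious = [user for user, hops in chains.items() if hops >= 3]
print(suspicious)  # ['svc']
```

The account `svc` hops from a workstation through an application server and a database to a domain controller, which is the kind of sequence a lateral movement detector would surface for review.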

5. Insider threat detection

Insider threats come in different forms. Threat actors can be users or employees who may have malicious intent or may make accidental errors. External actors who manage to gain access to a compromised account can also be considered insider threats. Regardless of the motivation behind them, insider threats often blend into normal activity, making them hard to detect with rule‑based SIEM alone.

Big data analytics allows SIEM solutions to connect the dots between unusual user behavior, abnormal resource access, deviations from historical patterns, and other correlated events.

This reveals compromised accounts, privilege abuse, and insider data exfiltration attempts with higher accuracy.

With key use cases in mind, let's see how your team can approach SIEM and big data integration. In the next two sections, we dive into architectural and tech stack considerations.

Read also

How to Enhance Your Cybersecurity Platform: XDR vs EDR vs SIEM vs IRM vs SOAR vs DLP

Build a resilient security posture by learning how integrated cybersecurity platforms support faster incident response, better visibility, and coordinated defense across systems.

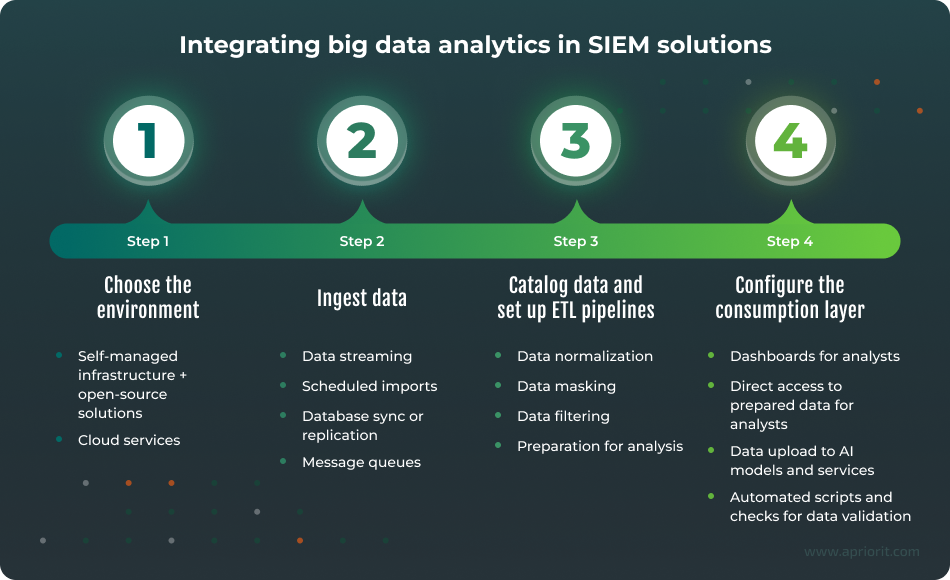

From theory to practice: Architectural considerations

Designing a SIEM solution enhanced with big data analytics requires more than simply adding a data lake or a few ML models. It demands creating a coordinated architecture capable of processing massive datasets and extracting actionable insights.

Below is a practical view (from a developer’s perspective) of how such an architecture works in real-world SIEM implementations:

Step 1. Choose the environment

You can work with big data:

- In your own (self‑managed) infrastructure using open‑source solutions

- In the cloud, leveraging managed ingestion, transformation, and analytics services

Regardless of the environment, the process generally follows the same lifecycle.

Step 2. Ingest data

Data ingestion is the process of collecting data from various sources into a database for storage, processing, and analysis within the organization.

This ingestion layer ensures that the SIEM solution receives complete, timely, and durable streams of information. This is crucial for further data correlation, threat detection, and incident investigation.

When planning the architecture, make sure to define all possible data sources and formats relevant to your project:

- Cloud logs

- Operating system logs

- Network telemetry

- Threat intelligence feeds

- Identity logs

- API events

- DB audit records

- IoT/OT signals, etc.

Common ingestion patterns include:

- Data streaming (real-time security events, firewall logs, endpoint telemetry)

- Scheduled imports (batch exports from legacy systems)

- Database sync or replication (continuous DB ingestion using migration tools)

- Message queues (buffering high-volume event flows)
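The buffering pattern can be sketched with Python's standard `queue` module: producers push events into a bounded queue, and a consumer drains them in batches. The `drain_batch` helper and the sizes are illustrative assumptions; in production, this role is typically played by a message broker rather than an in-process queue.

```python
import queue


def drain_batch(q, max_items):
    """Take up to `max_items` events from the queue without blocking."""
    batch = []
    while len(batch) < max_items:
        try:
            batch.append(q.get_nowait())
        except queue.Empty:
            break
    return batch


# A bounded buffer: put() blocks when it is full, which applies
# backpressure to producers instead of dropping events.
buffer = queue.Queue(maxsize=1000)
for i in range(5):
    buffer.put({"event_id": i})

first = drain_batch(buffer, 3)
second = drain_batch(buffer, 3)
print(len(first), len(second))  # 3 2
```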

Step 3. Catalog data and set up ETL pipelines

Once ingested, data must be prepared for analytics. This is why the next step is to catalog the data and run ETL pipelines.

Key tasks include:

- Normalizing data: Convert logs and telemetry into a unified format.

- Masking data: Hide or tokenize sensitive information like personally identifiable information, financial data, and health data.

- Filtering data: Remove noise and redundant fields to increase processing speed.

- Preparing data for analysis: Structure datasets so they're optimized for machine learning, correlation queries, and dashboards.

This step ensures that analytics engines, SIEM correlation rules, and ML models operate on clean, consistent, and enriched datasets.
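The normalization, masking, and filtering tasks can be sketched in a few lines. All field names, the `SECRET_KEY` value, and the list of noisy actions below are assumptions made for this example. In practice, the key would come from a secret store, and the target schema would follow whatever standard your platform adopts.

```python
import hashlib
import hmac
from datetime import datetime, timezone

SECRET_KEY = b"demo-key-load-from-a-secret-store"  # hypothetical key
NOISY_ACTIONS = {"heartbeat", "keepalive"}          # hypothetical noise list


def pseudonymize(value):
    """Replace a sensitive value with a keyed hash so records can still
    be correlated without exposing the original value."""
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def first_present(record, *names):
    """Return the first field from `names` that exists in the record."""
    for name in names:
        if record.get(name) is not None:
            return record[name]
    return None


def normalize(raw):
    """Map a source-specific record to one flat schema.
    Returns None for noise and for records without a timestamp."""
    action = str(first_present(raw, "action", "event_type") or "").lower()
    ts = first_present(raw, "ts", "timestamp")
    if action in NOISY_ACTIONS or ts is None:
        return None
    user = first_present(raw, "user", "username") or "unknown"
    return {
        "time": datetime.fromtimestamp(float(ts), tz=timezone.utc).isoformat(),
        "action": action,
        "user": pseudonymize(str(user)),
        "src_ip": first_present(raw, "src_ip", "client_ip"),
    }


firewall = {"ts": 1700000000, "action": "DENY", "user": "alice", "src_ip": "10.0.0.5"}
idp = {"timestamp": "1700000060", "event_type": "login",
       "username": "alice", "client_ip": "10.0.0.5"}
noise = {"ts": 1700000001, "action": "heartbeat"}

records = [r for r in map(normalize, [firewall, idp, noise]) if r is not None]
print(len(records))                              # 2
print(records[0]["user"] == records[1]["user"])  # True
```

Because both sources map to the same pseudonym for the same user, downstream correlation still works even though the original username never reaches the analytics layer.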

Step 4. Configure the consumption layer

Now, it’s time to carefully plan how security teams and systems will use the data.

Based on the data and tools available, your team can:

- Create dashboards for analysts to monitor security posture in real time and visualize anomalies and incident timelines

- Provide analysts with direct access to prepared data via SQL engines or notebooks

- Feed data to ML models for anomaly detection, UEBA, and predictive analytics

- Run automated scripts and checks for data validation, misconfiguration detection, etc.

In practice, these elements work well together: dashboards provide visibility, automated rules generate alerts, and models provide deeper anomaly detection beyond static SIEM rules.
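As a rough illustration of direct SQL access to prepared data, the sketch below uses an in-memory SQLite database as a stand-in for a real SQL engine. The `auth_events` table, its columns, and the threshold of three failures are assumptions made for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE auth_events (username TEXT, outcome TEXT, src_ip TEXT)"
)
conn.executemany(
    "INSERT INTO auth_events VALUES (?, ?, ?)",
    [
        ("alice", "failure", "10.0.0.5"),
        ("alice", "failure", "10.0.0.6"),
        ("alice", "failure", "10.0.0.7"),
        ("bob", "failure", "10.0.1.9"),
        ("bob", "success", "10.0.1.9"),
    ],
)

# Accounts with repeated failed logins, and how many addresses they came from.
rows = conn.execute(
    """
    SELECT username,
           COUNT(*) AS failures,
           COUNT(DISTINCT src_ip) AS distinct_ips
    FROM auth_events
    WHERE outcome = 'failure'
    GROUP BY username
    HAVING COUNT(*) >= 3
    ORDER BY failures DESC
    """
).fetchall()

print(rows)  # [('alice', 3, 3)]
```

The same query shape could back a dashboard panel or a scheduled automated check.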

What tools to use: Example of a technology stack

Below are two example toolsets for building a complete SIEM architecture enhanced with big data analytics, depending on where it runs:

- Cloud environments

- Local (open-source) environments

Whether you’re building in the cloud or in a local environment, the layers will be the same.

1. Cloud‑based architecture (AWS example)

Ingestion layer:

- Amazon SQS – queueing high-volume event streams

- Amazon S3 – direct uploads to a data lake (bulk log uploads or long-term archival storage)

- Amazon Kinesis Data Streams – real-time ingestion of security telemetry

- Amazon MSK (managed Kafka) – distributed event streaming

- AWS Database Migration Service (DMS) – migrating on-premises SQL databases to the cloud; continuous data synchronization

- Amazon RDS, DynamoDB – providing application and operational logs as data sources

Transform layer:

- AWS Glue – ETL jobs for formatting, cataloging, and cleaning data

- AWS Lambda – event-driven transformations or enrichment

- Amazon EMR – Spark/Hadoop clusters for large-scale data processing

Load / analytics layer:

- Amazon Redshift – data warehouse for analytics and SIEM queries

- Amazon SageMaker – building and training ML models

- Amazon Athena – SQL queries against data stored in S3 data lakes

- Amazon QuickSight – dashboards and visual analytics for security teams

This architecture enables scalable ingestion, fast transformation, and flexible querying, which is ideal for SIEM systems focused on UEBA, threat hunting, and long-term forensic analytics.

2. Local / open-source architecture

Ingestion layer:

- Apache NiFi – highly flexible flow-based data ingestion

- Apache Kafka – distributed streaming for real-time logs

- Logstash – log ingestion, parsing, and enrichment

Transform layer:

- Apache Spark – large-scale data processing and ML jobs

- Apache Airflow – orchestration of ETL pipelines

- Apache Hadoop, Apache Hive – distributed storage and SQL querying

Load / consumption layer:

- Apache Superset – dashboards and visualization

- PostgreSQL – structured storage for correlation or enrichment data

- Custom AI/ML models – anomaly detection, behavior analysis, threat scoring

This approach gives your team full control over the stack, which makes it suitable for high-security sectors with strict data residency and compliance requirements.

Enhancing SIEM with big data analytics is as much a data engineering challenge as a security one. Consider adopting the Open Cybersecurity Schema Framework (OCSF): it standardizes security telemetry into a common schema, which makes it easier for SIEM platforms to apply big data analytics, machine learning, and cross-domain correlation.

Related project

Improving a SaaS Cybersecurity Platform with Competitive Features and Quality Maintenance

Find out how our team contributed to improving a SaaS cybersecurity platform by addressing security challenges, optimizing system performance, and increasing overall platform reliability.

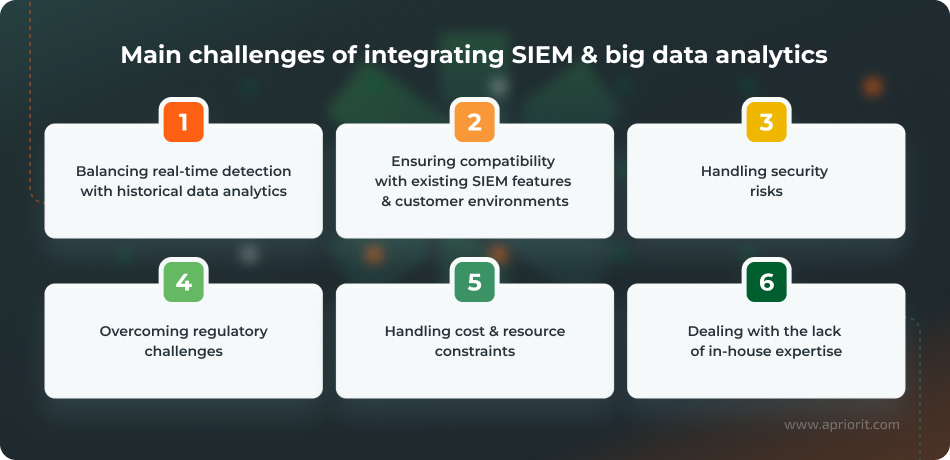

Implementation challenges and how to overcome them

Though integrating big data analytics into SIEM platforms brings significant business benefits, it also introduces technical complexities.

Let’s discuss the most common challenges and practical ways to overcome them:

1. Balancing real-time detection with historical data analytics

A modern SIEM solution enhanced with big data analytics is expected to provide two value streams:

- Real-time threat detection, meaning that stream processors must identify immediate threats such as credential abuse or lateral movement

- Deep historical analysis, meaning that historical engines should support large queries, advanced analytics, and investigation workflows without affecting real-time performance

Serving both value streams at once introduces operational challenges. Teams often encounter latency spikes when real-time pipelines are overwhelmed, especially during attack bursts or log storms. At the same time, historical queries can consume significant compute and storage resources, driving up hardware costs and forcing vendors to consider tiered storage and intelligent caching mechanisms.

To overcome this challenge, adopt a dual‑pipeline architecture:

- Use a stream processing engine (Kafka Streams, Flink, Spark Streaming, Pulsar Functions) to run event correlation and detection logic with low latency, from milliseconds to a few seconds depending on the engine.

- Use a batch/historical analytics engine (ClickHouse, BigQuery, Snowflake, Apache Druid, Delta Lake + Spark) for long-term investigations, UEBA training, and deep forensic queries.
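The dual-pipeline idea can be sketched in plain Python: every event is appended to a historical store (the cold path) and also passed through a lightweight in-memory detector (the hot path). The `DualPipeline` class, its thresholds, and the event fields are hypothetical, and the sketch assumes events arrive in time order. In a real deployment, a stream processor and an analytics engine like those listed above would take these two roles.

```python
from collections import defaultdict, deque


class DualPipeline:
    def __init__(self, window_s=60, max_failures=5):
        self.window_s = window_s
        self.max_failures = max_failures
        self.recent_failures = defaultdict(deque)  # hot-path state per user
        self.archive = []                          # stand-in for a historical store

    def ingest(self, event):
        self.archive.append(event)   # cold path: keep everything for later analysis
        return self._detect(event)   # hot path: decide immediately

    def _detect(self, event):
        if event["outcome"] != "failure":
            return None
        window = self.recent_failures[event["user"]]
        window.append(event["ts"])
        # Drop failures that fell out of the sliding window.
        while event["ts"] - window[0] > self.window_s:
            window.popleft()
        if len(window) >= self.max_failures:
            return f"possible brute force: {event['user']}"
        return None

    def failures_by_user(self):
        """Historical query that scans the archive, not the hot-path state."""
        counts = defaultdict(int)
        for event in self.archive:
            if event["outcome"] == "failure":
                counts[event["user"]] += 1
        return dict(counts)


pipeline = DualPipeline()
alerts = [
    pipeline.ingest({"ts": t, "user": "alice", "outcome": "failure"})
    for t in range(5)
]
print(alerts[-1])                   # possible brute force: alice
print(pipeline.failures_by_user())  # {'alice': 5}
```

Keeping the detector's state small and separate from the archive is what lets heavy historical queries run without slowing down real-time detection.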

2. Ensuring compatibility with existing SIEM features and customer environments

Introducing a big data analytics layer risks breaking existing SIEM capabilities.

Event collectors, correlation engines, dashboards, and alert workflows that customers rely on may not align with the new architecture. Your customers may need to migrate search languages or adapt correlation rules to a new data model. This puts pressure on solution architects to design transition paths that minimize disruption.

How to overcome this challenge:

- Implement a stable abstraction layer to prevent exposure to raw backend changes.

- Maintain two parallel engines during migration: the legacy engine for existing rules and dashboards, and a new engine for high-throughput analytics and advanced detections.

- Keep schema versions and allow old schemas to coexist.

- Implement rule versioning so older detections continue running.

- Use schema evolution tools like Avro, Protobuf, and Iceberg.
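One common way to let old and new schemas coexist is to upgrade records on read, as in the sketch below. The version numbers, field names, and the `to_current` helper are assumptions made for illustration and aren't tied to any particular schema tool.

```python
CURRENT_VERSION = 2


def upgrade_v1_to_v2(event):
    """Hypothetical change: v2 renames `ip` to `src_ip` and adds `tenant`."""
    upgraded = dict(event)  # copy, so the stored v1 record stays untouched
    upgraded["schema_version"] = 2
    upgraded["src_ip"] = upgraded.pop("ip")
    upgraded.setdefault("tenant", "default")
    return upgraded


UPGRADERS = {1: upgrade_v1_to_v2}


def to_current(event):
    """Apply upgraders step by step until the event matches the current schema."""
    version = event.get("schema_version", 1)
    while version < CURRENT_VERSION:
        event = UPGRADERS[version](event)
        version = event["schema_version"]
    return event


old_event = {"schema_version": 1, "ip": "10.0.0.5", "user": "alice"}
new_event = {"schema_version": 2, "src_ip": "10.0.0.9", "user": "bob", "tenant": "eu"}

print(to_current(old_event)["src_ip"])  # 10.0.0.5
print(to_current(new_event) is new_event)  # True: already current, returned as is
```

With this approach, legacy rules can keep reading v1 records while new detections work against the upgraded view.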

3. Handling security risks

Distributed systems introduce new attack surfaces: message queues, object stores, metadata catalogs, etc. Also, since big data platforms store and process sensitive log data, misconfigurations can lead to data exposure or tampering.

How to overcome this challenge:

- Apply data masking and pseudonymization to sensitive fields during ETL.

- Encrypt data both at rest and in transit.

- Implement role‑based access control and attribute-based access control.

- Segment data lakes and logs by sensitivity and business unit.

- Design automated compliance checks, hardened cluster configurations, and secure defaults.

4. Overcoming regulatory challenges

Regulated industries require strict data governance and data privacy guarantees. Expanding data stores increases the risk of data leaks and non-compliance with the GDPR, DORA, HIPAA, PCI DSS, and other legal and regulatory frameworks.

How to overcome this challenge:

- Use automated retention and deletion policies.

- Implement audit dashboards (Kibana, QuickSight, Superset) to support compliance reporting.

- Use data minimization and anonymization to store only necessary security telemetry.
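An automated retention policy can be as simple as a per-category maximum age that a scheduled job enforces. The categories, retention periods, and the `apply_retention` function below are hypothetical; actual periods must come from your legal and compliance requirements.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention periods per log category.
RETENTION = {
    "auth": timedelta(days=365),
    "debug": timedelta(days=7),
}
DEFAULT_RETENTION = timedelta(days=90)


def apply_retention(records, now):
    """Split records into those to keep and those past their retention period."""
    kept, expired = [], []
    for record in records:
        max_age = RETENTION.get(record["category"], DEFAULT_RETENTION)
        if now - record["time"] <= max_age:
            kept.append(record)
        else:
            expired.append(record)
    return kept, expired


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "category": "auth", "time": now - timedelta(days=30)},
    {"id": 2, "category": "debug", "time": now - timedelta(days=30)},
    {"id": 3, "category": "netflow", "time": now - timedelta(days=120)},
]

kept, expired = apply_retention(records, now)
print([r["id"] for r in kept])     # [1]
print([r["id"] for r in expired])  # [2, 3]
```

Returning the expired records instead of silently dropping them makes it possible to log each deletion for audit purposes.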

5. Handling cost and resource constraints

Big data SIEM architectures require skills, infrastructure, and tuning, which makes them costly. Licensing, cloud compute, and long-term storage can significantly increase long-term expenses.

Optimization challenges are not always financial. In many real‑world implementations, constraints may come from non‑budget requirements, such as:

- A need to minimize development effort and resources

- Demand to reduce operational overhead

- Requirements for low‑code or no‑code operation so that analysts without programming skills can use the system effectively

- Limitations caused by legacy architectures: for example, maintaining compatibility with older on‑premises formats while migrating workloads to the cloud

These factors often shape architectural choices as much as direct cost considerations. The right decision always depends on the project’s specific context.

How to overcome this challenge:

- Adopt managed services like Amazon EMR, Azure HDInsight, or BigQuery to reduce operational overhead.

- Use open-source stacks to offset licensing costs.

- Plan phased rollouts focused on high‑value datasets to maximize ROI early.

- Implement efficient data reduction techniques like event sampling and log deduplication.
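The two data reduction techniques mentioned above can be sketched as follows. The `dedupe` and `keep_sample` helpers, the key fields, and the sampling approach are illustrative assumptions. Note that sampling is usually reserved for low-value, high-volume telemetry, because any sampled-out security event is lost to later investigations.

```python
import hashlib


def dedupe(events, key_fields=("host", "action", "message")):
    """Collapse events with identical key fields into one record with a count."""
    merged = {}
    for event in events:
        key = tuple(event.get(field) for field in key_fields)
        if key in merged:
            merged[key]["count"] += 1
        else:
            merged[key] = {**event, "count": 1}
    return list(merged.values())


def keep_sample(event_id, percent):
    """Deterministic sampling: the same ID always gets the same decision."""
    digest = hashlib.sha256(event_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < percent


events = [
    {"host": "web1", "action": "deny", "message": "port scan"},
    {"host": "web1", "action": "deny", "message": "port scan"},
    {"host": "db1", "action": "login", "message": "ok"},
]

reduced = dedupe(events)
print(len(reduced), reduced[0]["count"])  # 2 2
```

Hash-based sampling keeps decisions consistent across workers, so all of them agree on whether a given event is retained.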

6. Dealing with the lack of in‑house expertise

Implementing big data SIEM requires cross-disciplinary skills and experience in:

- Cybersecurity development

- Big data engineering

- AI & ML development

- Working with distributed systems

- Quality assurance and security testing

Adopting big data analytics will transform not only your SIEM product but your organization as well. Teams must adopt new roles and processes to align with the new architecture. Another challenge is finding talent with relevant experience and knowledge, which brings additional recruiting and onboarding expenses.

How to overcome this challenge:

- Invest in training and upskilling for security and data engineering teams.

- Use co-managed SIEM/SOC services or augmentation partners.

- Standardize pipelines using repeatable templates, IaC, and documented runbooks to lower complexity.

- Consider outsourcing certain technical tasks to qualified vendors who can offer quick access to rare talent and expertise that your in-house team lacks.

Read also

Telemetry in Cybersecurity: Improve Security Monitoring with Telemetry Data Collection (+ Code Examples)

Discover how structured telemetry data helps you detect threats faster and improve security monitoring across complex systems. Explore practical approaches used in modern security architectures.

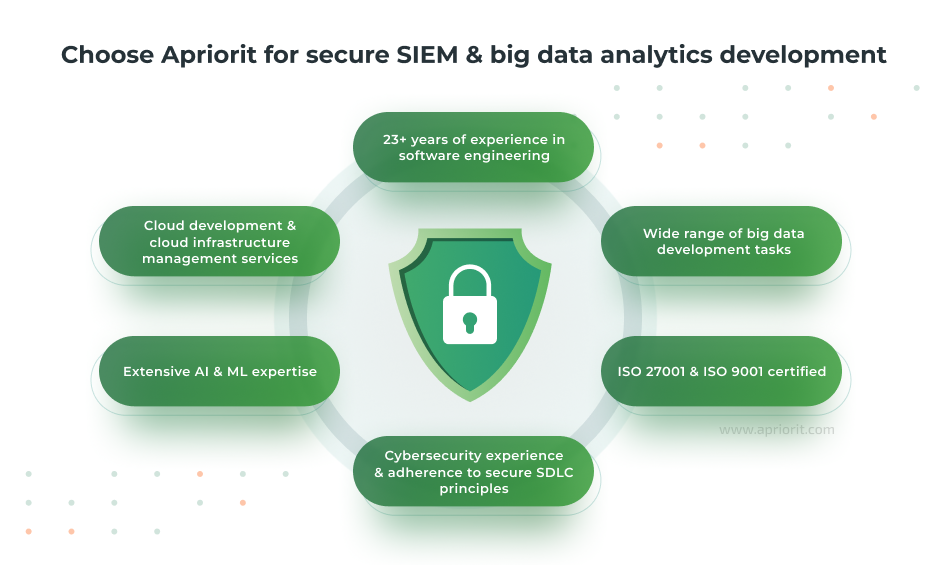

How Apriorit can help you with secure SIEM & big data analytics development

Enhancing a SIEM platform with big data analytics requires deep engineering expertise, secure development practices, and experience designing scalable ecosystems. This is exactly where Apriorit brings exceptional value.

With 23+ years of experience in software engineering, we offer strong cybersecurity, AI and ML, and big data development skills. Apriorit helps merge these technologies for both cybersecurity vendors and businesses wanting to enhance their corporate security architecture.

Our teams are ready to assist you with development tasks of any complexity:

- Building a cybersecurity solution from scratch or enhancing an existing platform for SIEM, SOAR, EDR, MDR, threat intelligence, log processing, etc.

- Developing cloud-native platforms that scale while controlling infrastructure costs

- Creating data lakes, data warehouses, and ETL pipelines

- Implementing real-time data streaming and processing mechanisms

- Creating dashboards for advanced analytics and business intelligence

- Ensuring robust data protection at every stage of development by following secure SDLC principles

- Building log collection and normalization mechanisms along with real-time and batch analytics pipelines

- Developing AI-driven functionalities like ML-based anomaly detection and advanced UEBA modules

- Designing cloud-native architectures and distributed systems

- Conducting security testing to identify vulnerabilities and efficiently fix them

Big data analytics holds the potential to transform SIEM platforms, allowing for faster and more intelligent threat detection. However, to gain the promised benefits, it’s crucial to implement big data analytics with the right architecture, security practices, and engineering expertise.

Apriorit helps organizations turn this vision into production-ready systems.

Our teams will help you enhance an existing SIEM solution with ML-based analytics, build a high‑throughput big data backbone, modernize legacy correlation engines, or design a new cloud-native security product.

Looking to modernize your SIEM platform?

Work with Apriorit’s experts to implement secure and high-performance big data analytics capabilities that scale and support long-term business growth.

FAQ

What is the role of big data analytics in SIEM solutions?

Big data analytics can significantly enhance SIEM platforms by enabling them to process, correlate, and analyze massive volumes of security telemetry in real time. Big data offers deeper historical context, making investigations and threat hunting significantly more effective.

Integrating big data analytics is key for the evolution of SIEM platforms, as it helps improve detection accuracy, reduce false positives, and identify advanced threats. For example, big data analytics assists with detecting insider misuse, lateral movement, and multi-stage attacks that traditional correlation rules alone would miss.

How long does it take to integrate big data into an existing SIEM platform?

The timeline varies widely based on infrastructure complexity, data volume, and modernization goals. Depending on the scope of work, implementing secure big data analytics might take from a few weeks to several months.

What technologies can I use to upgrade SIEM capabilities?

The best technologies for you will depend on your project goals and needs. The most commonly adopted technologies include:

- Apache Kafka, AWS Kinesis, or managed Kafka services for streaming systems

- Apache Spark, Flink, or cloud-native equivalents for distributed processing

- Amazon S3, Azure Data Lake, Google BigQuery, Snowflake, or Hadoop HDFS for data lakes and warehouses

- Elasticsearch or OpenSearch for search and analytics

- Spark MLlib or SageMaker for ML development

Can I modernize legacy SIEM tools without a full rebuild?

Yes. Many organizations successfully extend legacy SIEM tools by adding big data components around them instead of replacing the entire platform. For example, your team can add stream processing layers for pre-correlation filtering or replace slow storage engines with distributed, low-cost back ends.

Why outsource SIEM improvements?

Outsourcing provides access to specialists in big data engineering, secure development, ML-based detection, and large-scale platform modernization. Such skills are difficult and expensive to maintain in-house.

A dedicated engineering partner like Apriorit accelerates delivery, reduces architectural mistakes, ensures compliance with security standards, and helps optimize long-term operational costs. This allows internal teams to focus on core product and business goals instead of complex infrastructure engineering.