Key takeaways:

- The EU AI Act makes compliance part of how you design and build AI systems.

- The risk level assigned to your AI system determines your obligations, with high-risk systems requiring strict lifecycle controls and detailed documentation.

- Companies targeting the EU market must comply, regardless of where they are based.

- Starting early with structured assessments and planned engineering processes helps reduce regulatory risk, delays, and costly redesigns.

The EU Artificial Intelligence Act, or AI Act, sets clear rules for how AI systems placed on the EU market or put into service in the EU (or whose outputs are used in the EU) must be designed, developed, and deployed. Unlike voluntary guidelines, the AI Act directly affects engineering decisions, from architecture and data pipelines to model testing and release processes. Non-compliance can lead to fines, blocked market access, and delayed product launches.

In this article, our experts explain what the AI Act means in practice. You’ll learn who it applies to, how AI systems are classified by risk, key compliance obligations, and practical steps your teams can take to align development with the Act’s rules.

This guide will be useful for CTOs, product architects, and product owners who are looking for actionable insights on how the AI Act affects product strategy, development workflows, and market readiness.

Contents:

- What is the EU AI Act?

- Who needs to comply with the EU AI Act?

- AI risk categories explained

- Enforcement timeline and transition periods for the EU AI Act

- Penalties for non-compliance under the EU AI Act

- How the EU AI Act changes AI software development

- How to prepare existing AI products for AI Act compliance: A practical step-by-step framework

- AI Act compliance checklist

- How Apriorit implements compliance-by-design for AI systems

What is the EU AI Act?

The European Union AI Act is the world’s first comprehensive regulatory framework governing artificial intelligence systems. It establishes legally binding requirements for how AI systems used in the EU should be designed, developed, deployed, and maintained.

At its core, the AI Act is designed to make sure that AI systems on the EU market are:

- Safe and robust

- Transparent in their operation

- Respectful of fundamental rights

- Properly governed throughout their lifecycle

Previous EU digital regulations focused primarily on data protection (such as the GDPR) or cybersecurity. So what does the EU AI Act regulate? The answer is AI functionality itself, particularly when AI systems affect safety, fundamental rights, or access to essential services.

The regulation introduces a risk-based approach, meaning not all AI systems are treated equally. The level of required controls depends on how much potential impact a system has on individuals or society. Let’s see whether you need to comply with the EU AI Act.

Who needs to comply with the EU AI Act?

The EU AI Act is a European Union regulation, but its reach extends beyond companies physically located in the European Union. If your AI system is placed on the EU market or its outputs are used within the EU, the regulation may apply regardless of where your company is based.

At the same time, the AI Act does not apply to every company that uses AI internally. Instead, it targets defined categories of economic operators involved in developing, placing, or operating AI systems on the EU market. These include:

- Providers — companies that develop an AI system or place it on the EU market under their name or trademark

- Deployers (users) — organizations that use AI systems in professional contexts within the EU

- Importers and distributors — entities that make AI systems available in the EU

- Authorized representatives — EU-based legal entities appointed by AI providers established outside the EU to act on their behalf under the AI Act

However, the AI Act does not regulate all AI activity uniformly. There are several types of AI systems that don’t have to comply with the AI Act:

- AI systems used purely for scientific research and development that aren’t used commercially

- Certain military and national security applications

- AI systems that are already governed by sector-specific EU regulations in highly regulated domains (though they may still face additional AI Act obligations depending on context)

- Some open-source models, depending on how they are distributed and used

Another important nuance is role-based responsibility. A company that modifies or substantially changes an AI system may become a “provider” under the AI Act, even if it did not originally develop the model. Also, fine-tuning, rebranding, or integrating third-party AI components can tighten compliance obligations.

You can use this checklist by the Future of Life Institute to determine whether you have obligations under the EU AI Act.

Understanding your role is the first step toward determining your compliance scope. From there, your AI system’s risk category determines the depth of your technical and organizational requirements. Before diving into the timeline for EU AI Act compliance, let’s look at the level of compliance your system needs.

AI risk categories explained

The EU AI Act classifies AI systems into four risk levels. A system’s risk level determines how heavy the compliance burden will be, from no specific obligations to a complete prohibition.

Table 1. Risk categories under the EU AI Act

| ✅ Minimal risk | ℹ️ Limited risk | ❗ High risk | 🚫 Unacceptable risk |
|---|---|---|---|
| ✔️ Productivity-enhancing AI ✔️ No automated decisions affecting rights ✔️ No use in high-impact sectors ✔️ Human retains full control ✔️ Low potential for societal harm | ✔️ AI interacting directly with humans ✔️ Generative AI producing synthetic content ✔️ Potential risk of user deception ✔️ No direct automated rights-impacting decisions ✔️ Primarily informational or assistive | ✔️ AI affecting fundamental rights, safety, or access to essential services ✔️ Automated decision-making about individuals ✔️ Used in critical or regulated sectors ✔️ Biometric or sensitive data processing ✔️ Listed in Annex III or embedded in regulated products | ✔️ Manipulative AI that distorts user behavior ✔️ Social scoring by public authorities ✔️ Exploits vulnerabilities (children, disabled persons) ✔️ Certain real-time biometric identification in public spaces ✔️ Clear threat to fundamental rights |
| Examples: 🟢 Grammar correction 🟢 Meeting summarization 🟢 Spam filters 🟢 Dashboard personalization | Examples: 🟡 Chatbots 🟡 Virtual assistants 🟡 Deepfake generators (with labeling) | Examples: 🟠 Medical diagnostics 🟠 Credit scoring 🟠 Recruitment screening 🟠 Border control 🟠 Driver monitoring | Examples: 🔴 Social scoring systems 🔴 Emotion recognition in schools 🔴 Untargeted facial recognition in public surveillance |

The AI Act does not regulate AI generically; instead, it regulates AI based on its impact.

The higher the risk category:

- The more thorough the documentation requirements

- The stricter the data governance controls

- The stronger the monitoring and oversight mechanisms

- The greater the regulatory exposure

Correctly identifying your system’s risk category is the starting point for any realistic compliance roadmap. Let’s see how much time you have to prepare your systems for the EU AI Act.


Enforcement timeline and transition periods for the EU AI Act

The AI Act entered into force in August 2024, but its obligations are being phased in over several years. This staged approach gives companies time to align their systems and processes with regulatory requirements.

Table 2. EU AI Act compliance roadmap

| Date | Phase | What changes |
|---|---|---|
| February 2025 | Prohibited AI ban | Deployment of systems categorized as “unacceptable risk” is no longer allowed |
| August 2025 | Governance obligations | Obligations for general-purpose AI models begin to apply |
| August 2026 | Core compliance for most AI systems | ❕ High-risk AI obligations become mandatory ❕ Transparency rules for limited-risk AI apply ❕ Governance and accountability requirements are enforceable |
| August 2027 | Extended high-risk provisions are fully applicable | ❕ Additional high-risk sector requirements enforced ❕ Conformity assessment procedures required ❕ EU database registration (where applicable) ❕ Strengthened oversight mechanisms |
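To make the roadmap easier to reason about, here is a minimal Python sketch that answers "which phases already apply on a given date?" The phase names come from Table 2. The day of the month (the 2nd) and the function names are assumptions added for illustration, since the table only lists months.

```python
from datetime import date

# Milestones from Table 2. The day of month is an assumption of this sketch;
# the table itself only names the month.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited AI ban"),
    (date(2025, 8, 2), "Governance obligations"),
    (date(2026, 8, 2), "Core compliance for most AI systems"),
    (date(2027, 8, 2), "Extended high-risk provisions"),
]


def phases_in_effect(on: date) -> list[str]:
    """Return the Table 2 phases that already apply on the given date."""
    return [name for start, name in MILESTONES if on >= start]


def next_milestone(on: date):
    """Return the next upcoming (date, phase) pair, or None if all have passed."""
    upcoming = [m for m in MILESTONES if m[0] > on]
    return upcoming[0] if upcoming else None
```

A planning script could call `next_milestone(date.today())` to show how much lead time a team has before the next phase starts.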

To understand how this roadmap affects your company, you need to determine how your AI system is classified under the regulation. Its risk level defines your scope of obligations, documentation requirements, and conformity procedures. Let’s take a look at what consequences you might face for failing to comply with the AI Act.

Penalties for non-compliance under the EU AI Act

The EU AI Act gives national supervisory authorities and the European Commission significant enforcement powers. Consequences of non-compliance are not limited to corrective warnings: non-compliance can lead to substantial financial penalties, market restrictions, and mandatory system withdrawal from the EU market.

Like other major EU regulations, the AI Act ties fines to global annual turnover, meaning exposure is not limited to EU revenue. The severity of penalties depends on the type of violation.

Table 3. Consequences of non-compliance with the EU AI Act

| Risk type | Impact |
|---|---|
| Financial | ❌ Fines up to €35M or 7% of global annual turnover for prohibited AI practices ❌ Fines up to €15M or 3% of global turnover for violations of core obligations ❌ Fines up to €7.5M or 1% of global turnover for providing incorrect or misleading information |
| Market access | ❌ Prohibition on placing the AI system on the EU market ❌ Mandatory withdrawal or recall of non-compliant systems ❌ Suspension of deployment until compliance is restored |
| Operational | ❌ Emergency system redesign or remediation ❌ Mandatory audits and documentation updates ❌ Delayed product launches or feature rollbacks ❌ Increased engineering and compliance workload |
| Reputational | ❌ Public enforcement decisions and regulatory scrutiny ❌ Loss of partner and customer trust ❌ Reduced competitiveness in regulated sectors |
| Strategic | ❌ Restricted expansion into EU markets ❌ Higher due diligence barriers in partnerships and M&A ❌ Increased long-term compliance costs |
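To show how the financial ceilings in Table 3 scale with company size, here is a short Python sketch. It assumes the higher of the two figures is the ceiling, which is our reading for larger companies; smaller companies may be treated differently, so check the exact rule with legal counsel. The tier keys and the function name are hypothetical.

```python
# Fine ceilings from Table 3: (fixed cap in EUR, percent of global annual turnover).
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "core_obligation": (15_000_000, 3),
    "misleading_information": (7_500_000, 1),
}


def max_fine_eur(violation: str, global_turnover_eur: int) -> int:
    """Upper bound of the fine, ASSUMING the higher of the two figures applies."""
    fixed_cap, turnover_percent = FINE_TIERS[violation]
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = global_turnover_eur * turnover_percent // 100
    return max(fixed_cap, turnover_based)
```

For a company with €1 billion in global annual turnover, the ceiling for a prohibited practice under this assumption is 7% of turnover (€70M), not the €35M fixed figure.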

Addressing AI Act requirements early is significantly less costly than responding after enforcement begins. Next, we focus on what becomes legally binding for developers of high-risk AI systems and how this shifts everyday engineering decisions.

Read also

Why OCSF Is Shaping the Future of Cybersecurity Integration

Accelerate your security strategy with a clear, hands-on guide to implementing the Open Cybersecurity Schema Framework. Our experts will help your team unify event data and enhance automation across tools.

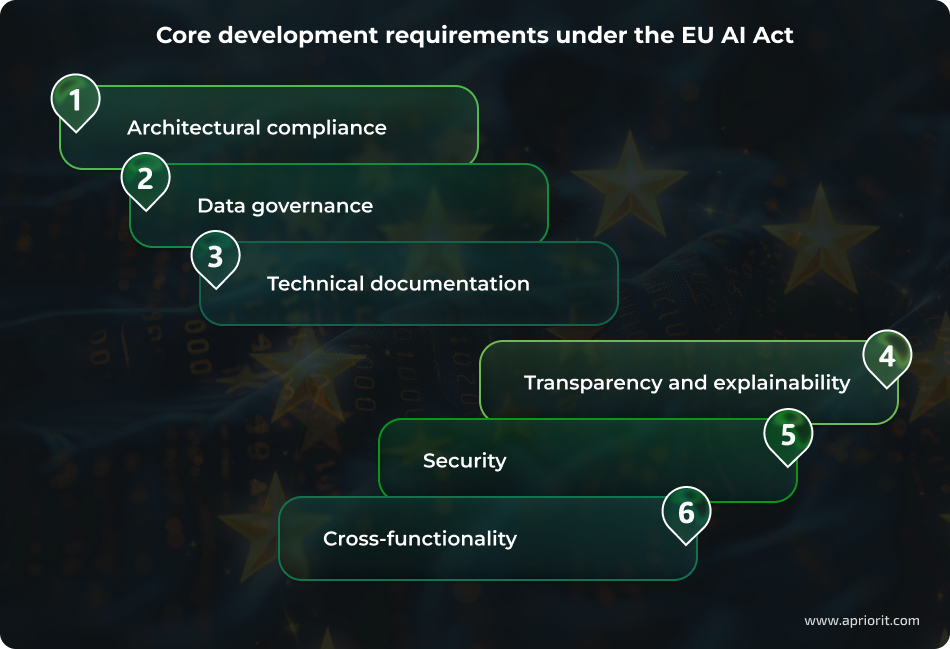

How the EU AI Act changes AI software development

Before outlining specific changes, it’s important to clarify their scope. The requirements that we describe below primarily apply to high-risk AI systems under the EU AI Act.

If your product falls into the minimal or limited risk categories, many of these obligations will not be mandatory. However, they may still serve as best practices, especially if your system might later support higher-risk use cases.

Risk classification becomes an architectural constraint. Your team must determine how your system will be categorized before finalizing its architecture, automation level, and model choices. This step is necessary because the assigned risk level directly determines validation depth, oversight mechanisms, and release readiness, so compliance must be considered during early product planning. Beyond scalability and accuracy, a system’s architecture now has to account for regulatory exposure.

For example, if your AI system supports hiring decisions, you may need to limit full automation and introduce mandatory human review instead of auto-rejecting candidates. In healthcare-related use cases, you may need to add detailed logging, explainability features, and stricter validation workflows to meet high-risk requirements. In some cases, you might even need to adjust feature scope or system autonomy to align with the requirements for your risk class.
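The hiring example can be sketched in a few lines of Python. This is an illustrative routing rule, not a prescribed design: the score scale, the threshold, and all names are assumptions. The only point is that the code has no "reject" path without a human in the loop.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    ADVANCE = "advance"
    HUMAN_REVIEW = "human_review"  # there is deliberately no automatic REJECT


@dataclass(frozen=True)
class ScreeningResult:
    candidate_id: str
    score: float  # hypothetical model score in [0, 1]


def route_candidate(result: ScreeningResult, advance_threshold: float = 0.8) -> Outcome:
    """Route low-scoring candidates to a human reviewer instead of rejecting them."""
    if result.score >= advance_threshold:
        return Outcome.ADVANCE
    return Outcome.HUMAN_REVIEW
```

Whether automatic advancement is acceptable for your use case is itself a design decision to review; a stricter variant would send every candidate through human review.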

Data governance turns into a formal compliance obligation. Organizations must be able to demonstrate that training, validation, and testing data are:

- appropriate for the system’s intended use

- traceable to their sources

- assessed for quality and bias risks

From an engineering perspective, this means you can’t treat data like an informal experiment anymore. You need clear data lineage, proper dataset versioning, and reproducible pipelines. If someone asks where the training data came from, how it was processed, or why the model behaves a certain way, you should be able to answer without guesswork.
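One lightweight way to answer "where did the training data come from?" is to record a fingerprint and provenance entry for every dataset version. The Python sketch below uses only the standard library. The record fields and function names are illustrative assumptions, not a format required by the AI Act.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetRecord:
    name: str
    version: str
    source: str        # where the data came from
    processing: tuple  # ordered preprocessing steps applied to the raw data
    sha256: str        # fingerprint of the exact bytes used for training


def fingerprint(data: bytes) -> str:
    """Return a SHA-256 hex digest that identifies this exact dataset content."""
    return hashlib.sha256(data).hexdigest()


def register_dataset(name, version, source, processing, data: bytes) -> DatasetRecord:
    """Create a lineage record at the moment the dataset is frozen."""
    return DatasetRecord(name, version, source, tuple(processing), fingerprint(data))


def verify_dataset(record: DatasetRecord, data: bytes) -> bool:
    """Check that the bytes on disk still match what was registered."""
    return record.sha256 == fingerprint(data)
```

In practice, a dedicated data versioning tool may serve this purpose better; the sketch only shows the minimum information worth capturing.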

Technical documentation becomes structured and audit-ready. Documentation is already part of the SDLC. However, under the AI Act, it must become significantly more detailed and structured, and it must be constantly maintained. It’s no longer enough to document for internal knowledge sharing; records must be complete, traceable, and ready for regulatory review.

Your team must thoroughly document and regularly update:

- System purpose

- Design decisions

- Performance metrics

- Limitations

- Risk mitigation measures

To keep everything aligned, your team will have to integrate documentation workflows directly into CI/CD pipelines and change management processes.
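As a sketch of what a documentation check in a CI/CD pipeline might look like, the Python snippet below fails a release when a required section is missing or when the documentation describes a different model version. The section keys mirror the list above; the dictionary layout and function names are assumptions made for illustration.

```python
# Sections taken from the list above; the key names are hypothetical.
REQUIRED_SECTIONS = (
    "system_purpose",
    "design_decisions",
    "performance_metrics",
    "limitations",
    "risk_mitigation",
)


def missing_sections(doc: dict) -> list[str]:
    """Return required sections that are absent or empty."""
    return [s for s in REQUIRED_SECTIONS if not str(doc.get(s) or "").strip()]


def documentation_problems(doc: dict, released_model_version: str) -> list[str]:
    """Collect reasons the documentation is not ready for this release."""
    problems = [f"missing section: {s}" for s in missing_sections(doc)]
    if doc.get("model_version") != released_model_version:
        problems.append("documentation does not match the released model version")
    return problems
```

A pipeline step could load the documentation metadata, call `documentation_problems`, and stop the release if the returned list is not empty.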

Transparency and explainability must be designed in. Transparency requirements mean that AI-powered systems must clearly disclose their use of AI and provide meaningful information about how outputs are generated. Your users have to understand when they are interacting with AI, and system limitations must be communicated in a structured way. Apart from this, explainability and oversight mechanisms need to be considered at the design stage.

With the AI Act, pure black-box deployments become harder to justify, so AI products will need built-in logging, clear explanation mechanisms, and defined human review processes as part of their architecture.

Security expands to cover AI-specific threats. The AI Act explicitly covers AI-specific attack vectors, including data poisoning, adversarial manipulation, and model misuse. Security therefore extends beyond infrastructure protection to include model robustness and data integrity safeguards. This ties AI development to secure SDLC practices, similar in spirit to the broader software security expectations introduced by the EU Cyber Resilience Act. Threat modeling must now account for risks unique to AI systems, not only traditional software vulnerabilities.

Compliance becomes cross-functional. The AI Act integrates compliance responsibilities directly into engineering, data, product, and security workflows. Regulatory readiness cannot be handled solely by legal teams, as it rests on how systems are designed, documented, tested, and monitored in practice. As a result, organizations may need:

- Clearer governance structures

- Defined ownership of AI lifecycle controls

- Coordinated review processes that align product decisions with regulatory obligations from the outset

For high-risk AI systems, the EU AI Act turns AI development into a regulated engineering process with mandatory controls across the entire lifecycle. To help you move from theory to action, the next section outlines a practical step-by-step framework for preparing existing AI products for AI Act compliance.

Read also

How to Share IT Compliance Responsibilities at Different SDLC Stages

Build trust with customers and regulators by integrating compliance requirements into your development lifecycle and creating software that meets strict industry standards. to enhance VAD platform security and performance by adding new capabilities to their platform and needed quality support for existing features.

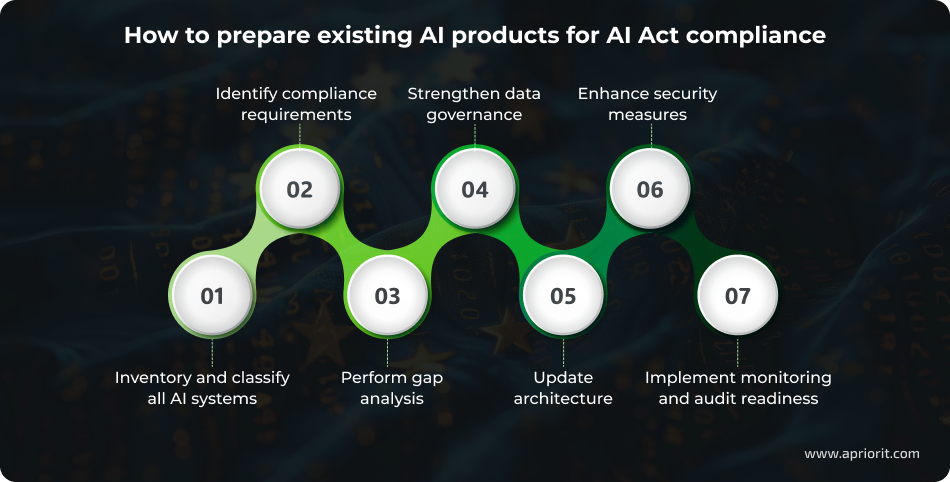

How to prepare existing AI products for AI Act compliance: A practical step-by-step framework

For organizations developing AI systems that may fall into higher risk categories, compliance should be built into the engineering strategy from the start, not treated as a final legal check before release. For existing AI products, preparation requires coordinated audits and adjustments across architecture, data pipelines, validation practices, documentation, and operational monitoring.

Based on our experience working with regulated software and security-critical systems, we recommend approaching AI Act readiness as a structured modernization process. Below is a practical framework you can apply whether your AI system is already deployed or nearing release.

Step 1. Inventory AI systems and reassess risk classification

Start by identifying all AI-driven components across your product portfolio. This includes:

- Obvious ML models

- Embedded scoring engines

- Ranking systems

- Biometric modules

- Third-party AI services integrated into your solution

After inventorying your systems, reassess each system’s risk category under the AI Act. Misclassification may either expose you to regulatory risk or lead to over-engineering. The key is to evaluate the system’s intended use, level of automation, impact on individuals, and sector-specific context.

Apriorit expert tip: Classify use cases, not just models. The same model can fall into different risk tiers depending on how it is deployed and what decisions it influences.
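The tip above can be reflected directly in how the inventory is stored. In the Python sketch below, each inventory entry is a use case rather than a model, and the tier is assigned by a human review, not computed by the code. The model names and the data structure are hypothetical; the example tiers follow Table 1.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


@dataclass(frozen=True)
class UseCase:
    model: str
    use_case: str
    tier: RiskTier  # decided by a human assessment, not derived automatically


def strictest_tier_per_model(use_cases) -> dict:
    """For each model, find the strictest tier among the use cases it serves."""
    strictest = {}
    for uc in use_cases:
        current = strictest.get(uc.model)
        if current is None or uc.tier > current:
            strictest[uc.model] = uc.tier
    return strictest


# Hypothetical inventory: the same model appears in two different tiers.
inventory = [
    UseCase("text-classifier-v3", "Spam filtering", RiskTier.MINIMAL),
    UseCase("text-classifier-v3", "Recruitment screening", RiskTier.HIGH),
    UseCase("support-bot", "Customer chatbot", RiskTier.LIMITED),
]
```

Here the same classifier is minimal risk as a spam filter and high risk in recruitment screening, so controls for that model need to satisfy the stricter use case.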

Step 2. Identify applicable obligations and define compliance scope

After classification, map the relevant AI Act obligations to each system. Not all requirements apply universally. Transparency duties, documentation depth, human oversight mechanisms, and conformity assessment procedures vary depending on risk level and deployment context.

By the end of this step, you should have a clearly defined compliance scope that outlines:

- Which obligations apply

- Which teams are responsible

- Which systems require priority remediation

This step helps your team implement only those controls that are absolutely necessary and save development time in the long run.

Apriorit expert tip: Treat compliance scoping as a technical mapping exercise, not a purely legal review. Engineering input is critical to determine what is realistically required.

Step 3. Perform a technical and documentation gap analysis

After you have mapped out all obligations, conduct a structured gap analysis against AI Act requirements. This analysis should cover:

- Current architecture

- Data practices

- Validation workflows

- Monitoring capabilities

- Documentation artifacts

As a result of this review, you should understand which gaps can be addressed through procedural updates and which require architectural changes. You might encounter weak points, such as undocumented training datasets, insufficient logging, missing validation records, unclear intended-use definitions, and limited human oversight mechanisms.

The outcome of this step should be a prioritized remediation roadmap that distinguishes quick wins from structural blockers.

Apriorit expert tip: Legacy AI systems often suffer from documentation drift. If documentation does not accurately reflect current model properties, retrace changes before building forward.
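A prioritized remediation roadmap can start as a very simple data structure. The Python sketch below separates quick wins from structural blockers and orders each group by severity. The severity scale, field names, and sample entries are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gap:
    title: str
    severity: int     # 1 = low, 3 = high (hypothetical scale)
    structural: bool  # True if closing the gap requires architectural changes


def build_roadmap(gaps) -> dict:
    """Split gaps into quick wins and structural blockers, most severe first."""
    by_severity = sorted(gaps, key=lambda g: g.severity, reverse=True)
    return {
        "quick_wins": [g.title for g in by_severity if not g.structural],
        "structural_blockers": [g.title for g in by_severity if g.structural],
    }
```

The output is only a starting point for discussion; effort estimates and ownership still need to be added by the teams involved.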

Step 4. Improve data governance and model validation practices

AI Act compliance heavily depends on demonstrating control over data quality and validation processes. You need to review existing pipelines for traceability, reproducibility, and bias monitoring mechanisms.

Strengthening data governance might include:

- Dataset versioning

- Formal validation criteria

- Representativeness checks

- Clearer separation between training, validation, and testing environments

Model performance metrics must align with the system’s intended use and be consistently documented.

The goal is not to rebuild your ML pipeline but to ensure that AI outputs can be explained, reproduced, and defended under legal scrutiny.

Apriorit expert tip: If you cannot reproduce a model output exactly, you have both a technical and a compliance issue. Reproducibility is foundational to regulatory readiness.
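Reproducibility usually starts with removing hidden randomness. The Python sketch below shows one small piece of that: a train/test split driven by an explicit seed, plus a fingerprint that lets you confirm a later run produced the same split. It is a simplified illustration with assumed names, not a full reproducible training pipeline.

```python
import hashlib
import random


def split_dataset(rows, seed: int, test_fraction: float = 0.2):
    """Shuffle with a local, seeded RNG so the split does not depend on global state."""
    rng = random.Random(seed)
    shuffled = list(rows)
    rng.shuffle(shuffled)
    cut = len(shuffled) - int(len(shuffled) * test_fraction)
    return shuffled[:cut], shuffled[cut:]


def split_fingerprint(train, test) -> str:
    """Hash the split so two runs can be compared without storing the data twice."""
    payload = repr((train, test)).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()
```

Storing the seed and the fingerprint next to the model version gives a quick check that a retraining run used the same split. Framework-level randomness such as GPU kernels or parallel data loaders needs separate handling.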

Step 5. Update architecture for transparency, traceability, and explainability

Many AI Act obligations cannot be satisfied through documentation alone. You might need architectural updates to support decision logging, explanation layers, or human-in-the-loop controls. The objective is to make the system’s behavior observable and reviewable without fundamentally redesigning it.

This step often includes:

- Implementing structured logging for model outputs

- Defining escalation workflows for human review

- Exposing meaningful system limitations in user interfaces

In some cases, adding interpretability mechanisms or structured metadata tracking may be necessary to ensure traceability of automated decisions.

Apriorit expert tip: Incremental transparency improvements are often more realistic than replacing a model. Start by improving observability and auditability of your data pipelines and system outputs.
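Structured logging of model outputs is often the first observability improvement to make. The Python sketch below builds one JSON record per automated decision. The field names are assumptions; which fields you actually need depends on your system and its risk class.

```python
import json
from datetime import datetime, timezone


def build_decision_record(model_version, input_ref, output, explanation,
                          needs_human_review, timestamp=None) -> str:
    """Serialize one automated decision as a JSON line for an audit log."""
    record = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_ref": input_ref,        # a reference to the input, not the raw data
        "output": output,
        "explanation": explanation,    # e.g. top contributing factors
        "needs_human_review": needs_human_review,
    }
    return json.dumps(record, sort_keys=True)
```

Logging a reference to the input instead of the input itself keeps personal data out of the log while still making the decision traceable.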

Step 6. Strengthen security, robustness, and misuse protections

AI systems introduce unique risks such as adversarial manipulation, data poisoning, and model abuse. Evaluate whether your system is resilient against these threats and whether appropriate monitoring controls are in place.

For your team, security hardening may include:

- Input validation safeguards

- Abuse detection mechanisms

- Access control reinforcement

- Secure model storage

- Structured incident response procedures

You should also incorporate robustness testing into quality assurance processes to assess how the model behaves under edge cases or unexpected inputs.

Apriorit expert tip: Extend your existing secure SDLC practices to AI-specific threats. Traditional application security testing does not fully cover model-level vulnerabilities.
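Two of the measures above, input validation and robustness testing, can be sketched in plain Python. The schema format, the tolerance values, and the `predict` callable are assumptions. Real robustness testing for ML models is considerably broader than this single-feature perturbation check.

```python
def validate_features(features: dict, schema: dict) -> list[str]:
    """Check numeric inputs against allowed ranges; schema maps name -> (low, high)."""
    errors = []
    for name, (low, high) in schema.items():
        value = features.get(name)
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            errors.append(f"{name}: missing or not numeric")
        elif not low <= value <= high:
            errors.append(f"{name}: {value} outside [{low}, {high}]")
    return errors


def is_stable(predict, features: dict, name: str, delta: float, tolerance: float) -> bool:
    """Check that a small change to one feature does not move the score too far."""
    baseline = predict(features)
    perturbed = predict({**features, name: features[name] + delta})
    return abs(perturbed - baseline) <= tolerance
```

A QA suite could run `is_stable` over a sample of real inputs and flag cases where tiny input changes flip the outcome.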

Step 7. Establish monitoring, change management, and audit readiness

AI Act compliance is not static. Once a system is deployed, the organization must monitor performance, manage updates responsibly, and maintain readiness for regulatory review.

During this step, your team needs to:

- Formalize change management for model retraining

- Define triggers for re-validation

- Implement post-deployment monitoring

- Make sure that documentation evolves alongside the system

Audit readiness requires maintaining structured evidence of compliance activities, validation results, and risk assessments.

Apriorit expert tip: Treat AI compliance as a lifecycle process and not a one-time release milestone. The biggest risks arise after deployment, when models are retrained, fine-tuned, or updated without reassessing risks and documentation. Introduce mandatory impact reviews, version tracking, and update-triggered validation checks to prevent silent compliance gaps.
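Update-triggered validation checks can be expressed as a comparison between the current release and a candidate release. The Python sketch below lists reasons a candidate should go through re-validation. The tracked fields and the accuracy threshold are illustrative assumptions to adapt to your own system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRelease:
    model_version: str
    dataset_sha256: str
    intended_use: str
    accuracy: float


def revalidation_reasons(previous: ModelRelease, candidate: ModelRelease,
                         max_accuracy_drop: float = 0.02) -> list[str]:
    """List reasons the candidate release needs re-validation before deployment."""
    reasons = []
    if candidate.dataset_sha256 != previous.dataset_sha256:
        reasons.append("training data changed")
    if candidate.intended_use != previous.intended_use:
        reasons.append("intended use changed")
    if previous.accuracy - candidate.accuracy > max_accuracy_drop:
        reasons.append("accuracy dropped beyond threshold")
    return reasons
```

An empty list means none of the tracked triggers fired; it does not replace a human impact review for significant changes.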

Preparing existing AI systems for AI Act compliance is about introducing structured governance instead of rebuilding everything from scratch. Organizations that approach this systematically can reduce regulatory exposure while improving the reliability and maturity of their AI products.


AI Act compliance checklist

Before diving into formal compliance programs, it helps to step back and ask a simple question: If a regulator reviewed your AI system tomorrow, could you clearly explain how it works, how it was trained, and how it is controlled?

Use the checklist below as a quick self-assessment of alignment with the EU AI Act.

Table 4. EU AI Act compliance checklist

| Area | Key question | Status indicator |
|---|---|---|
| Risk classification | Have we formally classified the AI system under the AI Act risk categories? | ❌ / ⚠️ / ✅ |
| Intended use and scope | Are intended use cases clearly defined and documented? | ❌ / ⚠️ / ✅ |
| Data governance | Are training, validation, and testing datasets documented, traceable, and assessed for bias? | ❌ / ⚠️ / ✅ |
| Technical documentation | Do we maintain up-to-date technical documentation describing system design, limitations, and validation? | ❌ / ⚠️ / ✅ |
| Transparency obligations | Are users informed when interacting with AI and made aware of system limitations where required? | ❌ / ⚠️ / ✅ |
| Human oversight | Are oversight and intervention mechanisms defined where legally required? | ❌ / ⚠️ / ✅ |
| Post-market monitoring | Do we monitor system performance and reassess risks after significant updates? | ❌ / ⚠️ / ✅ |
| Conformity and evidence | Could we provide structured compliance evidence to regulators if requested? | ❌ / ⚠️ / ✅ |

If you answered ❌ or ⚠️ to most of the questions, your system may not yet meet key obligations under the EU AI Act. The earlier you identify these gaps, the easier and less costly it is to address them.
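If you track the checklist in code or in a spreadsheet export, the self-assessment can be summarized automatically. The Python sketch below uses the areas from Table 4 and treats unanswered areas as ❌. The function name and the output format are assumptions.

```python
# Areas taken from Table 4.
CHECKLIST_AREAS = (
    "Risk classification",
    "Intended use and scope",
    "Data governance",
    "Technical documentation",
    "Transparency obligations",
    "Human oversight",
    "Post-market monitoring",
    "Conformity and evidence",
)


def summarize_checklist(answers: dict) -> dict:
    """List areas not yet marked ✅ and flag when most of the checklist is open."""
    statuses = {area: answers.get(area, "❌") for area in CHECKLIST_AREAS}
    open_areas = [area for area, status in statuses.items() if status != "✅"]
    return {
        "open_areas": open_areas,
        "mostly_open": len(open_areas) > len(CHECKLIST_AREAS) / 2,
    }
```

The `mostly_open` flag mirrors the rule of thumb above: if most answers are ❌ or ⚠️, the system likely needs a structured gap analysis.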

How Apriorit implements compliance-by-design for AI systems

At Apriorit, we approach AI compliance as a technical discipline embedded into architecture, development workflows, and security controls from day one. With us, you will get:

- Secure SDLC for AI systems. You’ll get development workflows aligned with regulatory requirements at every step of development. We integrate AI-specific threat modeling, structured validation, secure CI/CD pipelines, and documentation processes directly into your SDLC so compliance becomes part of everyday engineering.

- Expertise in regulated industries. You’ll benefit from our extensive experience delivering AI solutions in healthcare, pharmaceuticals, and other compliance-heavy environments. This means your AI solution will be developed with sector-specific regulations, sensitive data handling rules, and audit expectations in mind.

- AI, ML, and cybersecurity engineering. You’ll receive AI systems that are both accurate and resilient. We strengthen data pipelines, implement protection against adversarial manipulation, and reinforce infrastructure-level security controls to reduce operational and regulatory risks.

- ISO-certified development processes. Your project will be supported by structured, traceable development practices aligned with ISO-based quality and information security standards, helping you maintain audit readiness and governance consistency.

- Modernization of existing AI systems. If you already have AI products, we will help you upgrade them. This includes system inventory and classification, technical gap analysis, improved traceability, enhanced transparency mechanisms, and targeted security hardening, focusing on incremental, realistic improvements instead of full redesigns.

- AI consulting and readiness assessments. If you’re at an initial stage, we can start with structured AI readiness assessments, compliance gap analysis, risk evaluation, and architectural recommendations. This helps you define a practical roadmap before investing in large-scale development changes.

Our focus is on practical implementation: designing, upgrading, and strengthening AI systems so compliance requirements are reflected in architecture, data flows, validation processes, and operational controls. Here are some of our recent AI projects in heavily regulated industries:

Project 1. AI-powered LMS module for a global pharmaceutical company

A global pharmaceutical company needed an AI module to personalize training inside their existing LMS while maintaining strict data protection standards.

By partnering with Apriorit, the client received:

- AI-generated personalized learning paths that increased course completion rates by 35%

- A scalable architecture supporting 1,000+ concurrent users

- GDPR-aligned data processing and secure handling of employee information

- A production-ready module integrated into their regulated enterprise environment

Project 2. Custom AI chatbot for corporate research

A consulting firm required a secure AI chatbot capable of analyzing large volumes of internal research materials and providing accurate, context-aware responses to employees, all without exposing sensitive corporate data.

With our help, the client received:

- A private, corporate AI chatbot that enables secure summarization and content generation using internal documents

- Full data privacy: prompts and generated outputs remain isolated within the client’s infrastructure

- Stable performance with up to 15 simultaneous users, with tested scalability up to 50 users

Project 3. Custom NLP model for medical data analysis

An international medical research organization required a secure NLP solution capable of processing and analyzing scientific and medical reports with high accuracy.

Apriorit delivered:

- A custom NLP model achieving over 92% accuracy

- Secure processing of sensitive medical datasets

- Structured validation workflows aligned with research standards

- Automated extraction and summarization of PDF- and XML-based reports

Project 4. AI-based healthcare solution development

A US-based healthcare center partnered with Apriorit to build an AI system capable of detecting, tracking, and measuring ovarian follicles across ultrasound video frames — a task that traditionally requires extensive manual review.

Our team provided:

- An AI system with 90% precision and 97% recall for accurate follicle detection and measurement

- Automated ultrasound video analysis that significantly reduces doctors’ manual workload

- Technical documentation for submission to the United States Food and Drug Administration to support medical device certification

Apriorit’s AI development and cybersecurity services can support your transition toward compliant, secure, and future-proof AI systems.


FAQ

Does the EU AI Act apply to companies outside the European Union?

Yes. The EU AI Act applies not only to companies established in the EU but also to organizations outside the EU if their AI systems are placed on the EU market or their outputs are used within the EU.

This means that US, UK, and Asian companies may still fall under the Act if they:

- Offer AI-enabled products or services to EU users

- Integrate AI into systems used by EU-based customers

- Distribute software through EU partners or subsidiaries

Which AI systems are considered high-risk under the AI Act?

High-risk AI systems are those that can significantly impact individuals’ safety, fundamental rights, or access to essential services. The classification depends on the intended purpose and use context, not just the technology itself.

For example, AI systems used in medical diagnostics, recruitment and CV screening, credit scoring, student admissions, biometric identification, or critical infrastructure management are typically considered high-risk.

What technical documentation is required for AI compliance?

The AI Act requires structured documentation that demonstrates how the system is designed, developed, validated, and monitored. Documentation must be detailed enough to allow regulators to assess compliance.

This typically includes:

- A clear description of the system’s intended purpose and functionality

- Data governance and dataset management practices

- Risk assessment and mitigation measures

- Model testing, validation, and performance metrics

- Post-deployment monitoring procedures

For many companies, this means formalizing processes that may previously have been informal or only partially documented.

How can existing AI products be adapted to meet EU AI Act requirements?

Existing AI systems do not necessarily need a full rebuild, but they often require structured upgrades. The process usually begins with a gap analysis to identify where current practices fall short of regulatory expectations.

The goal is to align architecture, processes, and documentation with compliance requirements while preserving core product functionality.

Does open-source AI software fall under the AI Act?

Open-source AI software can fall under the EU AI Act depending on how it is used and distributed. The Act includes certain exemptions for open-source components, particularly when they are made available without commercial intent. However, when open-source AI is integrated into a commercial product or placed on the EU market, the organization deploying it is still responsible for compliance.

In other words, using open-source models or libraries does not automatically remove regulatory obligations. Companies remain accountable for how the AI system functions in practice, how it is deployed, and whether it meets applicable requirements under the AI Act.