Despite all the benefits serverless platforms bring to application developers, the security of serverless applications is still a concern. Because serverless infrastructure is managed by third-party service providers, developers have limited access to the security settings for serverless environments.

In this article, we list major security challenges and risks of serverless applications and explore best practices for improving the security of your serverless solution. This article will be helpful for developers who are considering using serverless technology for their projects and want to do so securely.

Serverless applications and responsibilities in a serverless architecture

Serverless applications are cloud-based software built using serverless computing architecture — a type of architecture where an application runs in event-triggered stateless compute containers that are fully managed by service providers.

The most well-known serverless offerings are AWS Lambda, Microsoft Azure Functions, and Google Cloud Functions.

Sometimes, the term Function as a Service, or FaaS, is used as a synonym for serverless, which can be confusing. Among developers, there are two points of view: some developers consider these terms interchangeable; others disagree. Those who think these terms are not equivalent say that FaaS is only an implementation of a serverless architecture. The server-side logic in FaaS runs in stateless compute containers; however, it remains the app developer’s responsibility, just like in traditional architectures.

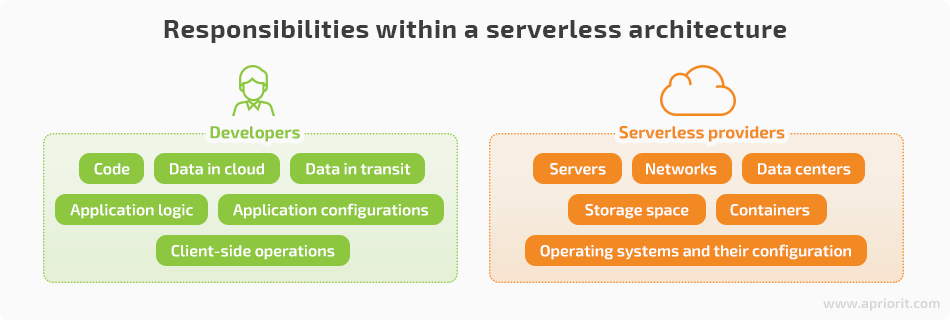

The segregation of responsibilities between developers and service providers is the main differentiator of serverless applications from traditional server-based solutions. Usually, serverless providers are responsible for data centers, networks, and their configuration. Meanwhile, developers take care of uploading the application code to the serverless platform and securing its functions, configurations, data, and application logic.

Security risks and vulnerabilities

Building solutions using rented servers means storing sensitive user data somewhere you don’t completely control. Thinking about serverless security is crucial, as data leaks can cause scandals and damage reputations.

There have been some well-known security breaches caused by attacks on cloud servers:

- In 2016, over 93 million voter registration records were compromised in Mexico. The reason was a poorly configured database illegally hosted on an Amazon cloud server outside of Mexico.

- The Timehop application suffered from an attack in 2018 in which the names and emails of 21 million users were compromised. The attacker abused Timehop admin credentials to access the application’s cloud environment.

- Records of more than 100 million Capital One customers were compromised in 2019. A cloud misconfiguration allowed a malicious actor to access credit card applications, social security numbers, and bank account numbers of the company’s customers.

Although serverless technology allows developers to eliminate the need for managing application servers and pass responsibility for some aspects of security to infrastructure providers, there’s no guarantee that these providers will ensure sufficient security.

Moreover, possible security vulnerabilities in web applications make them susceptible to application-level attacks such as:

- Cross-site scripting (XSS)

- Command/SQL injection

- Denial of service (DoS)

- Broken authentication

- Broken authorization

The problem is that, in most cases, serverless applications are vulnerable to the same attacks that may happen to traditional applications. Also, there can be other security challenges caused by the nature of the serverless architecture.

That’s why it’s especially important for those who are considering using serverless platforms to carefully read the terms of agreement with service providers. Here are a few essential things you should keep in mind when exploring a provider’s policy:

- Take your time to explore the terms of a serverless provider’s agreement, as these agreements are often difficult to comprehend at first sight. With a deep understanding of each clause of the agreement, you’ll be able to come up with a comprehensive security policy and avoid misunderstandings with the provider.

- Be attentive when choosing the region to store your application content. For instance, the AWS Customer Agreement specifies that Amazon secures the content of customers’ applications and won’t access it except as necessary to comply with the law or a binding order of a governmental body. Since the laws and governmental bodies that apply depend on where the content is stored, you should be careful when specifying regions for your application’s content.

- Carefully check the security responsibilities of the service provider. If some security issues aren’t covered by the service provider, make sure your team can deal with them before adding them to your security strategy.

- Know your rights and security responsibilities from the beginning. Google Cloud Platform specifies rights and responsibilities in the Data Processing and Security Terms section, making the customer responsible for use of services and for providing additional security controls, securing account authentication credentials, and backing up data as appropriate.

Now let’s take a closer look at the critical security challenges that may appear in serverless applications.

Security challenges with serverless applications

Serverless computing is still quite a new technology, so handling it is more complicated than handling traditional software environments. Software developers and architects often lack the experience needed to ensure decent serverless app security.

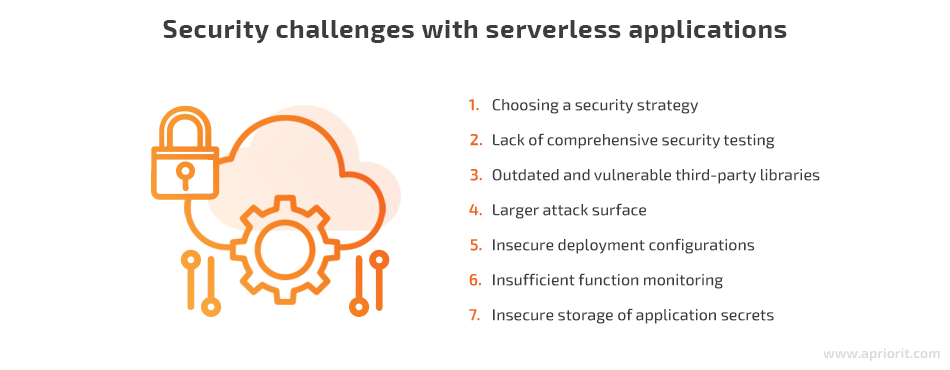

Seven major security challenges may arise when creating serverless applications. Let’s explore each of them in detail.

Choosing the right security strategy

Application security gets trickier when it comes to cloud and serverless deployments. Thus, coming up with a relevant security strategy also becomes more challenging.

The first issue is that the development team has to rely on the service vendor to ensure the security of the solution. Before starting to collaborate with a service provider, you need to ensure they can maintain your application’s security at an appropriate level and protect your data and intellectual property from theft.

Another issue is that serverless vendors provide different levels of security and features to protect their customers’ projects. This means you have to discuss the choice of vendor with your security specialists first, then thoughtfully explore the terms of the agreement proposed by a particular vendor.

You should also consider that one day, you may choose multi-cloud deployments or shift your project to another serverless platform. You have to be careful when choosing serverless vendors and be attentive to the details of the agreements they offer.

Lack of comprehensive security testing

Security officers and testers often face additional challenges when testing the security of serverless solutions. Even more challenges arise when serverless applications interact with cloud storage, remote third-party services, databases, and backend cloud services.

To identify problems in serverless applications, QA engineers can use tools commonly used for other cloud solutions, but they also have to carefully analyze the source code itself. To review thousands of lines of code, QA engineers need patience, basic programming skills, and knowledge of web application security testing methodologies. Moreover, it can be challenging to find and hire a QA specialist with decent knowledge of and experience testing serverless applications.

Outdated and vulnerable third-party libraries

When you use a serverless platform, you’re most likely relying on third-party libraries, which often depend on additional libraries themselves, for your application to function. This is a conventional and helpful way to speed up development. However, attackers can exploit both known and not-yet-discovered vulnerabilities in these libraries to launch an attack.

Although third-party libraries receive updates that add functionality and patch security vulnerabilities, these fixes may not arrive quickly enough for your serverless application. There’s also a risk that a library you use becomes outdated and exposes your customers’ private data.
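As a minimal sketch of how a build step might flag outdated dependencies, the snippet below compares installed package versions against a table of minimum acceptable versions. The `MINIMUM_SAFE` table, the package names, and the version numbers are hypothetical placeholders, not real advisories, and the version parsing is deliberately simplified.

```python
import re
from importlib import metadata

# Hypothetical minimum acceptable versions; in practice this table would come
# from your own security review or a vulnerability feed.
MINIMUM_SAFE = {"examplelib": "1.4.0", "otherlib": "3.0.0"}


def parse_version(text: str) -> tuple:
    """Turn '2.31.0' into (2, 31, 0), keeping the leading digits of each part."""
    numbers = []
    for chunk in text.split("."):
        match = re.match(r"\d+", chunk)
        if match is None:
            break
        numbers.append(int(match.group()))
    return tuple(numbers)


def find_outdated(installed: dict, minimums: dict) -> list:
    """List installed packages whose version is below the required minimum."""
    outdated = []
    for name, minimum in minimums.items():
        current = installed.get(name)
        if current is not None and parse_version(current) < parse_version(minimum):
            outdated.append(f"{name} {current} < {minimum}")
    return sorted(outdated)


def installed_versions() -> dict:
    """Collect name -> version for every distribution in the current environment."""
    versions = {}
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:
            versions[name.lower()] = dist.version
    return versions
```

Calling `find_outdated(installed_versions(), MINIMUM_SAFE)` before packaging a function would return a list of findings that a deployment script could treat as a failure. A dedicated dependency scanner is likely a better fit for real projects; this only illustrates the idea.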

Larger attack surface

The diversity of event sources used by serverless applications (APIs, cloud databases, HTTP requests, cloud storage) extends the potential attack surface.

Usually, developers apply standard application layer protections such as web application firewalls and intrusion prevention and detection systems to inspect messages. However, in a serverless architecture, developers don’t have access to servers or operating systems, so they can’t deploy these traditional security layers on the hosts that run their functions.

One of the most important issues to consider is the risk of event injections. Serverless architectures are event-driven, which means that threat actors can find ways to trigger and invoke functions with untrusted user-supplied data. Also, as with functions in any software, serverless functions are prone to other attacks, such as cross-site scripting and denial of service.
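To illustrate event injection, here is a minimal sketch that assumes a function looks up a record using a field taken straight from the incoming event. The `users` table, the event shape, and both handler names are hypothetical, and an in-memory SQLite database stands in for a cloud database.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create a throwaway database with two example users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (username TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def unsafe_handler(event: dict, conn: sqlite3.Connection) -> list:
    # Vulnerable: the event field is pasted into the SQL text itself.
    query = f"SELECT email FROM users WHERE username = '{event['username']}'"
    return conn.execute(query).fetchall()


def safe_handler(event: dict, conn: sqlite3.Connection) -> list:
    # The value is passed as a bound parameter, so it is treated as data only.
    return conn.execute(
        "SELECT email FROM users WHERE username = ?", (event["username"],)
    ).fetchall()
```

With an event such as `{"username": "x' OR '1'='1"}`, the unsafe handler returns every row in the table, while the parameterized version returns nothing because no user has that literal name.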

Insecure deployment configurations

To attract more customers, serverless providers offer various customization options and ready-to-go configurations. Although such solutions seem appealing, they can lead to some unobvious vulnerabilities when the settings aren’t managed correctly.

The biggest danger is that developers often rely too much on serverless providers and don’t pay enough attention to default security settings. Default cloud storage configurations, for example, may lead to misconfigured authentication and authorization processes and other security flaws. All these things may result in the exposure of confidential corporate information and a leak of user data.

Insufficient function monitoring

The absence of efficient event monitoring and logging in serverless apps also may lead to security concerns. Without robust live monitoring and error reporting, developers risk missing critical defects and not maintaining logs with essential information, such as which users have accessed sensitive data and when.

Although serverless providers take care of logging functionality, it’s often not enough. To achieve a decent level of security monitoring and provide a full audit log, a development team has to work on the logging logic. It should meet all the needs of the project: collect real-time logs from serverless functions and cloud services and push these logs to a remote security information and event management (SIEM) system.
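As one possible starting point for such logging logic, the sketch below emits each audit event as a single JSON line, a format that log shippers and SIEM systems can usually parse. The field names (`user_id`, `resource`, `action`, `request_id`) and the `audit` logger name are assumptions chosen for illustration; forwarding the lines to a SIEM is left to the platform's log pipeline.

```python
import json
import logging
import time

AUDIT_FIELDS = ("user_id", "resource", "action", "request_id")


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime(record.created)),
            "level": record.levelname,
            "message": record.getMessage(),
        }
        # Values passed via `extra=` are stored as attributes on the record.
        for key in AUDIT_FIELDS:
            if hasattr(record, key):
                entry[key] = getattr(record, key)
        return json.dumps(entry)


def build_audit_logger(stream=None) -> logging.Logger:
    """Return a logger that writes JSON lines to the given stream (stderr by default)."""
    logger = logging.getLogger("audit")
    logger.setLevel(logging.INFO)
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.propagate = False
    return logger


def log_access(logger: logging.Logger, user_id: str, resource: str,
               action: str, request_id: str) -> None:
    """Record who touched which resource, and as part of which request."""
    logger.info(
        "data access",
        extra={"user_id": user_id, "resource": resource,
               "action": action, "request_id": request_id},
    )
```

A function would call `log_access(...)` whenever it reads or changes sensitive data, which gives the audit trail an answer to "which user accessed what, and when".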

Insecure storage of application secrets

In serverless applications, developers package each function separately, which means that a single centralized configuration file can’t be used. Traditional methods for injecting secrets may not be applicable and may create security risks because serverless applications tend to be stateless. While developers can use various solutions to manage serverless secrets, not all of them are secure enough.

Leaving credentials unencrypted in configuration files is a bad idea, as any user who has permission to read these files will be able to access this sensitive data. Another critical issue is storing secrets as environment variables, as they also can be compromised by threat actors. To address this issue, consider using reliable secret management tools.

Best practices to enhance security in serverless applications

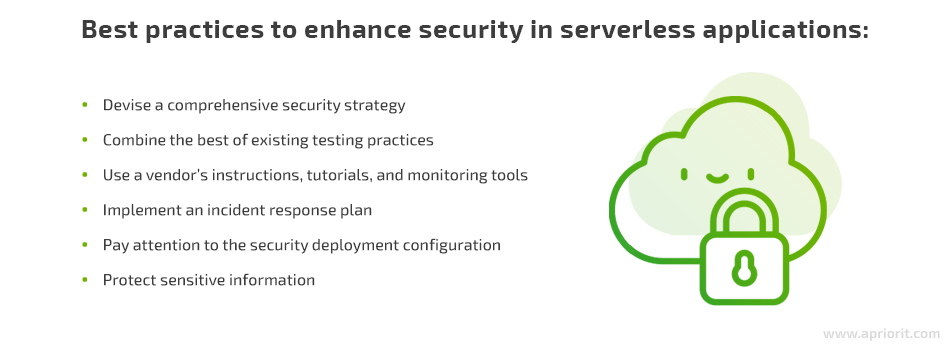

Since serverless architecture is a relatively new approach, it’s susceptible to the security issues we’ve mentioned. While serverless providers are working on eliminating these risks, you should follow best practices to address the risks that are your responsibility.

Devise a comprehensive security strategy

Consider all the risks and challenges your serverless application can face and create a robust security strategy to avoid major pitfalls right from the start.

Here are a few useful tips for improving your security strategy:

- Validate event and input data that comes from HTTP/HTTPS traffic to the serverless application.

- Explore your security policies and access permissions in detail.

- Consider implementing the principle of least privilege and giving each function minimum permissions.

- Perform static code analysis and conduct penetration testing to detect vulnerabilities.

Combine the best of existing testing practices

Serverless applications can be more challenging to test than traditional ones. Even basic methods and techniques like stress testing and fuzzing can make a big difference in serverless application security testing. In addition, security engineers have to analyze the function code itself to identify flaws in business logic and improper use of APIs and data types.

Robust testing of authentication, permissions, and session management should minimize the risk of side-channel attacks, user hijacking, and information leakage. Statistical techniques, often used for performance tuning and scalability testing, can be applied to detect denial-of-service and penetration vulnerabilities.
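The fuzzing mentioned above can be sketched in a few lines. This example assumes a hypothetical `handler` that expects a JSON body with an integer `quantity` between 1 and 100; the payload generator and the set of acceptable status codes are illustrative choices, not a complete fuzzing strategy.

```python
import json
import random
import string


def handler(event: dict) -> dict:
    """Hypothetical function under test: expects a JSON body with an integer 'quantity'."""
    try:
        body = json.loads(event.get("body") or "")
        quantity = body["quantity"]
        if isinstance(quantity, bool) or not isinstance(quantity, int):
            raise ValueError("quantity must be an integer")
        if not 1 <= quantity <= 100:
            raise ValueError("quantity out of range")
    except (ValueError, KeyError, TypeError):
        return {"statusCode": 400, "body": "invalid request"}
    return {"statusCode": 200, "body": json.dumps({"reserved": quantity})}


def random_payload(rng: random.Random):
    """Produce a well-formed, malformed, or wrongly typed request body."""
    generators = [
        lambda: None,
        lambda: rng.randint(-10**6, 10**6),
        lambda: "".join(rng.choice(string.printable) for _ in range(rng.randint(0, 40))),
        lambda: json.dumps({"quantity": rng.choice([0, 1, 100, 101, -1, "7", None, 1.5, [], {}])}),
        lambda: json.dumps([rng.random() for _ in range(rng.randint(0, 5))]),
    ]
    return rng.choice(generators)()


def fuzz(handler_fn, iterations: int = 1000, seed: int = 0) -> list:
    """Return the events that made the handler crash or answer with an unexpected status."""
    rng = random.Random(seed)
    failures = []
    for _ in range(iterations):
        event = {"body": random_payload(rng)}
        try:
            response = handler_fn(event)
            if response.get("statusCode") not in (200, 400):
                failures.append(event)
        except Exception:
            failures.append(event)
    return failures
```

An empty result from `fuzz(handler)` means every generated event was either processed or rejected cleanly. Any event in the returned list is a candidate for a regression test.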

Security engineers usually combine different testing techniques to check serverless applications for possible flaws and weaknesses:

- Interactive application security testing (IAST)

- Dynamic application security testing (DAST)

- Static application security testing (SAST)

Let’s explore the benefits and drawbacks of using these three approaches for testing the security of serverless applications:

| Testing approach | Pros | Cons |
| --- | --- | --- |
| Dynamic application security testing | Provides testing coverage for HTTP interfaces | Can hardly be used to test serverless applications that interact with non-HTTP sources or backend cloud services |
| Static application security testing | Detects software vulnerabilities using data flow analysis, control flow, and semantic analysis | Can show false positive results because serverless applications contain multiple distinct functions that are stitched together using event triggers and cloud services. False negatives can appear if testing tools don’t consider specific constructs of serverless applications |
| Interactive application security testing | More accurately detects vulnerabilities in serverless applications compared to DAST and SAST | Can hardly be used if an application consumes input from non-HTTP sources. Can require an instrumentation agent to be installed on the local machine, which is not an option in serverless environments |

Use a vendor’s instructions, tutorials, and monitoring tools

Insufficient event monitoring and logging is a significant issue among serverless applications. To address this problem, leading cloud providers offer their own recommendations:

- Security Overview of AWS Lambda

- Google Infrastructure Security Design Overview

- IBM Guide for Cloud Security

- Oracle Cloud Infrastructure Security Guide and Announcements

You can also explore general guides and various tutorials for applying proper security logging. Although most of these were created for cloud servers and traditional applications, you can still apply some of their recommendations to your serverless software. For example, the OWASP Top 10 (2017) Interpretation for Serverless can be used to secure possible attack vectors and explore common attack scenarios.

You may also consider using monitoring tools that work tightly with serverless platforms. For example, you may use Dashbird, Rookout, or IOpipe for working with AWS Lambda-based applications. SignalFx, a tool that ensures real-time visibility and performance monitoring for your functions, is capable of monitoring AWS Lambda, Google Cloud Functions, and Azure Functions. Stackdriver is a solution for monitoring Google Cloud Functions logs.

Implement an incident response plan

Lack of real-time incident response mechanisms in your serverless solution is a huge disadvantage for developers but a great benefit for attackers. Integrating an incident response plan is essential to detect early signs of an attack, resolve issues fast, and keep your serverless application secure.

First, you should explore guides and recommendations from your serverless provider on responding to security incidents. Here’s a list of several guides and documents — both from serverless vendors and other sources — that may help you create and implement an incident response plan:

- AWS Security Incident Response Guide

- Google Cloud Data incident response process

- Microsoft Azure Tutorial: Respond to security incidents

- NIST Computer Security Incident Handling Guide

- CISA National Cyber Incident Scoring System

- Hardening AWS Environments and Automating Incident Response for AWS Compromises

You can establish swift incident response by using the Volatility framework, TheHive Project, SIFT Workstation, and Sumo Logic.

Here are a few useful tips to create a comprehensive incident response plan:

- Identify the critical components of your application

- Perform regular security audits to find security weaknesses

- List and analyze all possible security incidents that can happen to your serverless application or have happened in the past

- Make a list of the incident response tools and mechanisms you can potentially use and pick those that meet your needs, work efficiently, and are affordable for your project

- Appoint team members that are responsible for handling security incidents

- Make sure to revise your incident response plan as needed

Pay attention to the security of deployment configurations

Although cloud providers offer various customizations and configurations to meet the specific needs of their customers, some of these default settings may not be secure enough. Here are several tips to avoid common security issues:

Secure event input to avoid malware injection. Input data has to be validated based on schemas and data transfer objects. Application algorithms have to check whether the data range, type, and length align with what’s expected.
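A minimal sketch of this kind of validation, assuming a hypothetical profile-update event with `email` and `age` fields, might use a data transfer object that checks type, length, and range before any business logic runs. The field names and limits below are illustrative assumptions.

```python
from dataclasses import dataclass

ALLOWED_FIELDS = {"email", "age"}


class ValidationError(ValueError):
    """Raised when an incoming event does not match the expected shape."""


@dataclass(frozen=True)
class ProfileUpdate:
    """Hypothetical data transfer object for a profile-update event."""
    email: str
    age: int

    @classmethod
    def from_event(cls, event: dict) -> "ProfileUpdate":
        # Reject fields the function does not know about.
        unexpected = set(event) - ALLOWED_FIELDS
        if unexpected:
            raise ValidationError(f"unexpected fields: {sorted(unexpected)}")
        email, age = event.get("email"), event.get("age")
        # Type checks (bool is excluded explicitly because it is a subclass of int).
        if not isinstance(email, str):
            raise ValidationError("email must be a string")
        if isinstance(age, bool) or not isinstance(age, int):
            raise ValidationError("age must be an integer")
        # Length check.
        if not 3 <= len(email) <= 254 or "@" not in email:
            raise ValidationError("email has an unexpected length or format")
        # Range check.
        if not 13 <= age <= 120:
            raise ValidationError("age is out of range")
        return cls(email=email, age=age)
```

A function would call `ProfileUpdate.from_event(event)` first and return an error response if `ValidationError` is raised, so that only well-formed data reaches the rest of the code.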

Be careful when integrating third-party services within your functions, as you have minimal control and visibility into the data source and the scope of user-controlled input.

Secure cloud-based storage. Incorrectly configured cloud storage authentication and authorization mechanisms are common weaknesses that affect many cloud-based applications. To avoid storage-related security issues, explore the following guides from serverless providers:

- Security to safeguard and monitor your cloud apps (IBM)

- Best practices for Cloud Storage (Google Cloud)

- How can I secure the files in my Amazon S3 bucket? (AWS)

- Security recommendations for Blob storage (Microsoft Azure)
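As a rough illustration of checking storage authorization settings, the sketch below scans a policy document in the AWS JSON policy format for `Allow` statements that apply to any principal. The function name is hypothetical, and the check is intentionally simple: it ignores `Condition` blocks, which can narrow an otherwise public statement, so a finding is a prompt for review rather than proof of exposure.

```python
def _as_list(value) -> list:
    """Normalize a policy value that may be missing, a single item, or a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def public_statements(policy: dict) -> list:
    """Return the Allow statements in a policy that apply to any principal."""
    found = []
    # A policy may carry a single statement object instead of a list.
    for statement in _as_list(policy.get("Statement")):
        principal = statement.get("Principal")
        is_public = principal == "*" or (
            isinstance(principal, dict) and "*" in _as_list(principal.get("AWS"))
        )
        if statement.get("Effect") == "Allow" and is_public:
            found.append(statement)
    return found
```

Running a check like this against exported bucket policies as part of a deployment pipeline is one way to catch accidental public access early.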

Follow secure coding guides, such as OWASP Web Application Security Guidance, to eliminate issues related to application code security at the early stages of software development.

Use only trusted libraries advised by cloud service providers to avoid known vulnerabilities. For example, for serverless applications, you may use the Function Development Kit, Python Lambda, and the Standard Library.

Protect sensitive information

You can also secure a serverless application by taking care of your sensitive data. Storing credentials in static files within the source code repository is not an option. Let’s explore several ways to protect sensitive information in serverless applications:

Encrypt all data. The Cloud Security Posture Repository has gathered detailed information about encryption and key management for Amazon Web Services, Microsoft Azure, and Google Cloud Platform.

Apply the principle of least privilege. Review roles and permissions given to users, third parties, and functions within the application and make sure these entities can access only the information and resources they need for legitimate purposes.
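One way to apply this to function permissions is to generate each function's policy from an explicit list of actions and a single resource, and to refuse wildcards. The sketch below assumes the AWS JSON policy format; the helper name, the example actions, and the ARN are illustrative placeholders.

```python
import json


def build_function_policy(actions: list, resource_arn: str) -> dict:
    """Build a policy document that allows only the listed actions on one resource."""
    for value in [*actions, resource_arn]:
        if "*" in value:
            raise ValueError(f"wildcards are not allowed here: {value!r}")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": sorted(actions), "Resource": resource_arn}
        ],
    }


if __name__ == "__main__":
    # Hypothetical read-only access to a single table for one function.
    policy = build_function_policy(
        ["dynamodb:GetItem", "dynamodb:Query"],
        "arn:aws:dynamodb:us-east-1:123456789012:table/orders",
    )
    print(json.dumps(policy, indent=2))
```

Keeping one small policy per function makes it easier to review what each function can actually reach if it is ever compromised.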

Use secure storage for credentials. Choose key vaults that are recommended by serverless providers and explore their guides to secure your sensitive data:

- Encrypting Environment Variables Client-Side in a Lambda Function

- Azure Key Vault documentation

- Manage service credentials for serverless applications (IBM)
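As a sketch of how a function might read credentials from such a vault at runtime instead of from files or environment variables, the class below fetches a secret on first use and caches it in memory for a short time. `SecretCache`, its parameters, and the five-minute default are assumptions for illustration; the `fetch` callable is whatever client call your provider's SDK offers.

```python
import time
from typing import Callable


class SecretCache:
    """Fetch secrets on demand from a secret store and keep them in memory briefly."""

    def __init__(self, fetch: Callable[[str], str], ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch      # function that retrieves a secret by name
        self._ttl = ttl_seconds  # how long a cached value stays valid
        self._clock = clock      # injectable for testing
        self._entries = {}       # name -> (time fetched, value)

    def get(self, name: str) -> str:
        now = self._clock()
        cached = self._entries.get(name)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]
        # Cache miss or expired entry: ask the secret store again.
        value = self._fetch(name)
        self._entries[name] = (now, value)
        return value
```

With AWS Secrets Manager and boto3, for example, `fetch` could be `lambda name: client.get_secret_value(SecretId=name)["SecretString"]`, where `client = boto3.client("secretsmanager")`. The short time-to-live limits calls to the vault while still picking up rotated secrets.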

Conclusion

Creating an application using a serverless architecture is a great way to reduce development costs and ensure the scalability of your solution. However, your development team has to keep potential security issues in mind right from the start.

Making sure that your serverless application is secure isn’t easy. Insecure deployment configurations, insufficient monitoring, and a lack of comprehensive security testing increase the risk of a security incident. However, these issues can be addressed by combining different types of testing techniques, following security coding guides, and securing event input to avoid malware injection.

If you’re planning to develop a serverless application, feel free to contact us. At Apriorit, we have a highly qualified virtualization and cloud computing team that’s ready to work with serverless projects of any complexity.