An object-relational mapper (ORM) is an essential part of any project that includes a database. It simplifies the maintenance and processing of data, allowing developers to focus on code. But each ORM framework has its pitfalls that you have to be aware of before implementing it.

In this article, one of our Apriorit experts discusses key issues we faced with Entity Framework in one of our .NET projects. He explains how to speed up materialization and SELECT, INSERT, UPDATE, and DELETE statements in Entity.

This article will be useful for developers who are looking for a library for their .NET projects or who are already working with Entity Framework.

Contents:

What is an ORM?

An object-relational mapper (ORM) is software responsible for mapping between databases and object-oriented programming languages. An ORM creates a virtual database scheme and allows a developer to manipulate data at the object level. A mapping shows how an object and its properties are associated with data in database tables. The ORM uses this information to manage the transformation of data between databases and objects.

Figure 1. An ORM manipulates data from a database as objects.

Using an ORM saves a developer a lot of time because it:

- Automates the processes of inserting, updating, and deleting data according to commands from an application

- Creates SQL queries automatically

- Simplifies updating, maintaining, and reusing code

- Enforces a Model–View–Controller pattern to structure code

On the negative side, ORM frameworks aren’t easy to learn. If you’re working with an extensive database, processing queries and editing data can take some time. Also, an abstract database can be a trap for inexperienced developers. Without knowledge of database operations, they may write unoptimized statements that slow down the database.

Need help with a .NET project?

Delegate development challenges to Apriorit professional engineers and get software that meets your technical requirements and business needs.

Entity Framework — a complex ORM library

Entity Framework is an ORM library that allows for working with databases using .NET objects. It was originally created in 2008 as a way to realize custom repository patterns (DbConnection, DbCommand, etc.) for ADO.NET. Entity Framework is mainly used by websites written in .NET.

Microsoft’s Entity Framework was created as a library for managing entities stored in a database management system (DBMS). As the name suggests, it focuses on and manages only separate entities. Another important element of the library is the DbSet class, which mimics a repository pattern.

Using Entity Framework gets complicated if you work with backend solutions that deal with large volumes of data. In this case, you can’t manipulate independent entities — you have to deal with datasets. For example, all SQL queries deal with datasets:

delete from "Table" where ... ; deletes objects that meet given requirements.

merge into "Table" using "Table2" on ... when matched then update ... not matched then insert ... ; merges data from two tables.

select * from "Table" where "Id" = 1 ; selects multiple objects with Id = 1. If the ID is the primary key, the set will consist of one element, but it’s still a set.

Despite its popularity and robust capabilities, Entity Framework is quite a heavy library that has several complex issues. Let’s discuss the obstacles we’ve faced while working with it.

Issues we’ve faced using Entity Framework

At Apriorit, we have developed and supported several complex .NET projects using Entity Framework. It’s an efficient tool for database management, but over the years we’ve identified several critical issues with this library:

- Slow processing of SELECT statements

- Poor data materialization

- Slow INSERT/UPDATE query processing with large volumes of data

- Issues with executing the DELETE statement

Let’s take a closer look at why these issues occur. In the third part of the article, I’ll talk about third-party libraries we’ve used to improve Entity Framework performance.

Read also

Entity Framework Database Schema Migrations: Types and Features

Ensure your project’s reliability throughout its evolution by establishing proper synchronization between an application’s data model and its database. Explore helpful database migration best practices and ways to overcome the limitations of the standard database migration tools from Apriorit’s experience.

Slow SELECT statement processing

The Entity Framework API is built on Language Integrated Query (LINQ), with a very small difference between client code and the SQL-translated code executed by the DBMS. Because of this, it has difficulties with processing the SELECT statement. For example, there’s a common performance issue with SELECT N+1 queries.

A SELECT N+1 query iterates through a collection of elements and executes an additional query for each element. This slows down database performance, especially if your database contains lots of records. The code for a SELECT N+1 query looks like this:

foreach(var entity in context.Entities)

{

if(entity.Field == 3)

{

Console.WriteLine("Entity :" + entity.Name);

}

}We wanted to get all the entities with Field == 3 and go through them in a cycle. Instead, we got one database round trip per entity.

A correct query should look like this:

foreach(var entity in context.Entities.Where(x=>x.Field == 3).ToArray())

{

Console.WriteLine("Entity :" + entity.Name);

}In this code sample, we’ve added two important things:

- An ==3 rule which defines that filtration must be done on the server side

- ToArray, which shows that the query should be performed on the client side

This way we can process the query without interacting with a server, speeding up data selection.

Poor data materialization

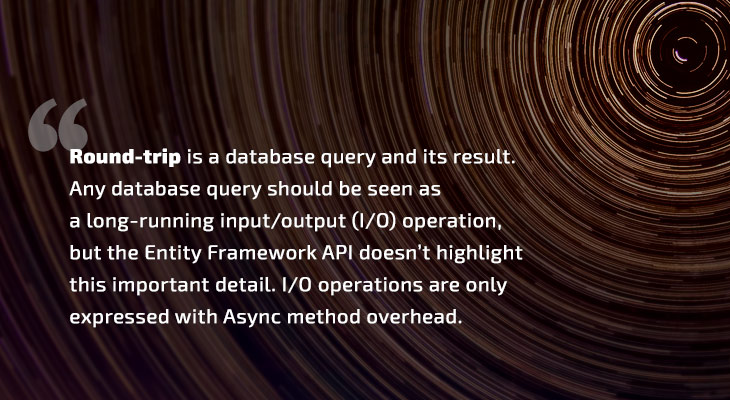

Materialization is an important Entity Framework rule that refers to getting client entities based on data from a database. During materialization, the framework performs a round trip query, mapping, and entity design operations.

This rule is also poorly represented in the Entity Framework API. In general, it looks like this: if you manipulate the IQueryable<T> object, it’s a LINQ request, not materialization. For example, the query

context.Entities.Where(x=>x.Field == 3)

returns an IQueryable object. Any SELECT provides the same result. But if you work with the IEnumerable interface, the query result will be the materialized data.

This rule has two issues:

- The AsQueryable method (which can be applied to any IEnumerable) is simply hiding that IEnumerable is already materialized. This doesn’t cause other major issues but can lead to materializing existing data with the ToArray method.

IQueryable is a direct successor of IEnumerable. Because of this, you can pass an unmaterialized LINQ request to the method waiting in the memory of IEnumerable. Materialization doesn’t happen during the cast, and our method goes back to the SELECT N+1 issue. The foreach query from the example above doesn’t work because it waits for IEnumerable but gets IQueryable instead.

Related project

Developing a Custom MDM Solution with Enhanced Data Security

Discover how building an MDM solution helped our client expand their services, maintain the required level of data security, release the first 5,000 tablets, and distribute the devices among their customers.

Slow INSERT/UPDATE query processing

An INSERT query runs slowly when you need to add a lot of data to a database. In order to speed up this process, you can add the following code to the configuration:

context.Configuration.AutoDetectChangesEnabled = false;

foreach(var data in datas)

{

context.Data.Add(data)

}

context.SaveChanges()Pay attention to two key elements here:

- The SaveChanges function is a round trip call for the INSERT statement. The Entity Framework API shows us that we need to go into the database when we’ve cut down the amount of received data only to what we actually need to change. Also, it’s noteworthy that SaveChanges is called after all the data is added to the context. It’s hard to imagine a task that requires calling SaveChanges for each record.

- Automatic change detection should be disabled. There’s a ChangeTracker mechanism for detecting changes in Entity Framework. ChangeTracker is part of the DbContext class, which stores all entities requested or added by it.

Here’s an example of ChangeTracker at work:

Say we have a UI application that interacts directly with a database using Entity Framework. When a user opens an entity for editing, this entity is requested from the DBMS, added to the context, and bound with the UI. A user edits the entity fields and calls SaveChanges by clicking the Save button. ChangeTracker compares the previously saved entity fields with the new ones and generates an UPDATE query to update the fields altered by the user. Entity Framework performs this query under the hood with no interaction with the client code.

ChangeTracker is also involved in the process of inserting data to a database by verifying that an entity didn’t previously exist in the context. This leads to O(log(n^2)) algorithm time complexity for each insert.

Issues with executing the DELETE statement

Deleting an entity or multiple entities is a major issue in EntityFramework. The only way to do it is by using the DbSet<T>.Remove(T entity) method. Usually, the DELETE statement looks like this:

{

var entities = _dbContext.Set<tentity>();

var entitiesToRemove = entities.Where(predicate).ToList();

foreach (var entity in entitiesToRemove)

{

entities.Remove(entity);

}

await _dbContext.SaveChangesAsync();

}We select all entities that match a predicate and then have to delete them one by one. It’s possible to speed up the process with a primary key for deleting the entity. In this way, we can delete an entity without querying it from the database:

var host = this.db.Hosts.Attach(new Host() { Id = id });

this.db.Entry(host).State = EntityState.Deleted;

this.db.SaveChanges();A primary key works the same way for the UPDATE statement:

var rule = new UbaRule { Id = ruleId, Deleted = true };

_dbContext.Rules.Attach(rule);

_dbContext.Entry(rule).Property(x => x.Deleted).IsModified = true;

_dbContext.SaveChanges();We can also delete entities without a query to the DBMS by manipulating a connection between objects:

internal void RemoveClientFromGroupImpl(int clientId, int groupId)

{

var client = new Client() { Id = clientId };

var group = new ClientGroup() { Id = groupId };

client.Groups.Add(group);

this.db.Clients.Attach(client);

client.Groups.Remove(group);

client.GroupSettingsType = GroupPermissionSettings.Custom;

this.db.SaveChanges();

}Nevertheless, if we need to delete an entity that matches a predicate, we can’t do it using Entity Framework tools. In this case, we need to use third-party libraries.

Read also

Modernizing Legacy Software without Refactoring Code Using .NET Standard

Find out why you need to modernize legacy applications and what role .NET Standard plays in the .NET ecosystem. Apriorit experts share tips on how to make your project cross-platform and allow support for modern features by migrating it to .NET Standard.

Third-party libraries for Entity Framework

Despite the issues we’ve discussed, Entity Framework is a very useful library for .NET projects. At Apriorit, we use third-party libraries to improve its performance.

Developers all over the world have created lots of additional libraries and workarounds for Entity Framework. We’ve chosen the Entity Framework.Utilities and RefactorThis.GraphDiff libraries for our projects because they’re easy and fast to implement. We’ve also examined the possibility of using NHibernate and Dapper. However, NHibernate looks overengineered because of the Hibernate Query Language and drivers, and Dapper doesn’t create SQL queries, and our projects have used a DBMS and SQL dialects.

Let’s see how to use Entity Framework.Utilities and RefactorThis.GraphDiff to speed up the performance of Entity Framework.

Entity Framework.Utilities

The EntityFramework.Utilities library speeds up create–update–delete (CUD) operations. In our projects, we’ve used it to solve the issue of deletion and updating by queries and deletion by a predicate. Using this library, we can delete entities with the following code:

EFBatchOperation.For(activityDatabase, activityDatabase.AlertActivitiesRel)

.Where(x => x.AlertId == alertId)

.Delete();

EFBatchOperation.For(activityDatabase, activityDatabase.AlertTerminalFunctionsRel)

.Where(x => x.AlertId == alertId)

.Delete();Here we can see a clear manipulation of a set:

- The first line is the context and the table

- The second line is the predicate

- The third line is the required action

UPDATE by query looks similar:

EFBatchOperation.For(this.activityDatabase, this.activityDatabase.Sessions)

.Where(x => ids.Contains(x.Id))

.Update(x => x.AlertLevel, x => upd.Key);The author of this library implemented only MS SQL. It’s possible to use this library with other DBMSs by implementing QueryProviders. In our projects, we’ve used Firebird and PostgreSQL.

Read also

Combining Clean Architecture and CQRS Principles in a .NET Core Application: A Practical Example

Improve your application’s code by combining principles of the Clean Architecture and Command and Query Responsibility Segregation (CQRS) approaches. Explore a practical example of doing so from Apriorit’s expert .NET developers.

RefactorThis.GraphDiff

Object graph manipulation is impossible in Entity Framework. RefactorThis.GraphDiff solves the object graph manipulation issue for REST-based servers.

Entity manipulation is a key aspect of RESTful services. For example, if you need to update an existing entity, you have to send an entity (including fields and collections with changes) to a database, and the server has to update it as is.

In Entity Framework, ChangeTracker manages entity fields, but it doesn’t work with collections. Because of this, we’ve come up with workarounds by looking for all of the added, deleted, or changed descendant entities across the inclusion graph.

Before we employed the RefactorThis.GraphDiff library, updating data in our projects looked like this:

public async Task UpdateSecretAsync(BaseSecret secret)

{

var oldSecret = await _managedDatabase.Secrets.FirstOrDefaultAsync(s => s.Id == secret.Id);

if (oldSecret.Type != secret.Type) // due to BaseSecret.Type being discriminator property.

{

_managedDatabase.Secrets.Remove(oldSecret);

_managedDatabase.Secrets.Add(secret);

}

else

{

var oldPermissions = await _managedDatabase

.Permissions

.Where(p => p.SecretId == secret.Id)

.ToListAsync();

var oldPermissionsToRemove = oldPermissions.Where(p => !secret.Permissions.Select(per => per.Id).Contains(p.Id));

_managedDatabase.Secrets.AddOrUpdate(secret);

_managedDatabase.Permissions.RemoveRange(oldPermissionsToRemove);

foreach (var p in secret.Permissions)

{

p.SecretId = secret.Id;

_managedDatabase.Permissions.AddOrUpdate(p);

}

}

await _managedDatabase.SaveChangesAsync();

}After implementing this library, the same operation is shorter and simpler:

public async Task UpdateSecretAsync(BaseSecret secret)

{

var oldSecret = await _managedDatabase.Secrets.FirstOrDefaultAsync(s => s.Id == secret.Id);

if (oldSecret.Type != secret.Type) // due to BaseSecret.Type being discriminator property.

{

_managedDatabase.Secrets.Remove(oldSecret);

_managedDatabase.Secrets.Add(secret);

}

else

{

_managedDatabase.UpdateGraph(secret, map => map.OwnedCollection(s => s.Permissions));

}

await _managedDatabase.SaveChangesAsync();

}Using Entity Framework.Utilities and RefactorThis.GraphDiff, we can speed up CUD operations for large amounts of data. RefactorThis.GraphDiff also helps us manipulate object graphs, which is impossible with EF out of the box. Both libraries improve overall performance and make a developer’s life easier.

Conclusion

In this article, we’ve discussed what Entity Framework is and dived deep into common issues with materialization and SELECT, INSERT, UPDATE, and DELETE statements. These operations can significantly slow down work with Entity Framework, especially when dealing with an extensive database. We’ve also shown you a way to speed up this library using third-party libraries.

At Apriorit, we have experience working with Entity Framework, developing custom .NET software, and creating enterprise data management solutions. Our engineers are ready to assist you with projects of any complexity and solve non-trivial tasks.

Looking for .NET developers?

Entrust your development activities to Apriorit expert engineers to overcome technical challenges and achieve desired results.